[20250514]truncare table相关数据段的确定与恢复.txt --//这几天一直在做truncare table,drop table的非常规恢复,通过修改obj$表的DATAOBJ#指向原来的数据段号,然后通过rowid扫描 --//的方式收集数据。该方式最大的缺点就是恢复很慢,原始的脚本定义行号是999,而且如果扫描数据块范围很大的情况下,真是很慢. --//我第一次尝试没有修改脚本使用行号999,后面修改150才快了不少。 --//如果通过redo分析,确定扫描范围,这样通过bbed进一步确定每块的最大行号,这样就可以大大加快恢复进度。 --//本文尝试如何通过分析redo的转储,确定drop table的相关数据段的占用数据块的范围。 --//我看了以前的笔记,[20181210]truncate的另外恢复4.txt,通过修改段头,恢复原来的信息,这样难度太大,应该很容易分析redo --//的转储获得相关信息。 1.环境: SCOTT@book01p> @ver2 ============================== PORT_STRING : x86_64/Linux 2.4.xx VERSION : 21.0.0.0.0 BANNER : Oracle Database 21c Enterprise Edition Release 21.0.0.0.0 - Production BANNER_FULL : Oracle Database 21c Enterprise Edition Release 21.0.0.0.0 - Production Version 21.3.0.0.0 BANNER_LEGACY : Oracle Database 21c Enterprise Edition Release 21.0.0.0.0 - Production CON_ID : 0 PL/SQL procedure successfully completed. ALTER TABLESPACE USERS ADD DATAFILE '/u01/oradata/BOOK/book01p/users02.dbf' SIZE 100M AUTOEXTEND ON NEXT 4M MAXSIZE UNLIMITED; --//表空间users增加1个数据文件,比较符合许多生产系统的情况。 2.测试环境建立: SCOTT@book01p> create table t7 as select * from all_objects; Table created. --//分析略。 SCOTT@book01p> create unique index pk_t7 on t7 (object_id); Index created. SCOTT@book01p> alter table t7 add constraint pk_t7 primary key (object_id); Table altered. SCOTT@book01p> create index i_t7_OBJECT_NAME on t7(OBJECT_NAME); Index created. --//建立一些索引比较符合实际的情况。 3.先转储该表的数据段头: SCOTT@book01p> @ seg2 t7 '' SCOTT@book01p> @ pr ============================== SEG_MB : 12 SEG_OWNER : SCOTT SEG_SEGMENT_NAME : T7 SEG_PARTITION_NAME : SEG_SEGMENT_TYPE : TABLE SEG_TABLESPACE_NAME : USERS BLOCKS : 1536 HDRFIL : 12 HDRBLK : 170 PL/SQL procedure successfully completed. SCOTT@book01p> alter system dump datafile 12 block 170; System altered. --//查看转储: Extent Control Header ----------------------------------------------------------------- Extent Header:: spare1: 0 spare2: 0 #extents: 27 #blocks: 1536 last map 0x00000000 #maps: 0 offset: 2716 Highwater:: 0x04800363 ext#: 26 blk#: 99 ext size: 128 #blocks in seg. hdr's freelists: 0 #blocks below: 1507 mapblk 0x00000000 offset: 26 Unlocked -------------------------------------------------------- Low HighWater Mark : Highwater:: 0x04800363 ext#: 26 blk#: 99 ext size: 128 #blocks in seg. hdr's freelists: 0 #blocks below: 1507 mapblk 0x00000000 offset: 26 Level 1 BMB for High HWM block: 0x04800301 Level 1 BMB for Low HWM block: 0x04800301 -------------------------------------------------------- Segment Type: 1 nl2: 1 blksz: 8192 fbsz: 0 L2 Array start offset: 0x00001434 First Level 3 BMB: 0x00000000 L2 Hint for inserts: 0x030000a9 Last Level 1 BMB: 0x04800301 Last Level II BMB: 0x030000a9 Last Level III BMB: 0x00000000 Map Header:: next 0x00000000 #extents: 27 obj#: 129070 flag: 0x10000000 Inc # 0 Extent Map ----------------------------------------------------------------- 0x030000a8 length: 8 --//0x030000a8 = set dba 12,168 = alter system dump datafile 12 block 168 = 50331816 0x030000b0 length: 8 0x030000b8 length: 8 0x030000c0 length: 8 0x030000c8 length: 8 0x030000d0 length: 8 0x030000d8 length: 8 0x030000e0 length: 8 0x030000e8 length: 8 0x030000f0 length: 8 0x030000f8 length: 8 0x03006000 length: 8 0x03006008 length: 8 0x03006010 length: 8 0x03006018 length: 8 0x03006020 length: 8 0x04800080 length: 128 0x03000100 length: 128 0x04800100 length: 128 0x03000180 length: 128 0x04800180 length: 128 0x03000200 length: 128 0x04800200 length: 128 0x03000280 length: 128 0x04800280 length: 128 0x03000300 length: 128 0x04800300 length: 128 Auxillary Map -------------------------------------------------------- Extent 0 : L1 dba: 0x030000a8 Data dba: 0x030000ab Extent 1 : L1 dba: 0x030000a8 Data dba: 0x030000b0 Extent 2 : L1 dba: 0x030000b8 Data dba: 0x030000b9 Extent 3 : L1 dba: 0x030000b8 Data dba: 0x030000c0 Extent 4 : L1 dba: 0x030000c8 Data dba: 0x030000c9 Extent 5 : L1 dba: 0x030000c8 Data dba: 0x030000d0 Extent 6 : L1 dba: 0x030000d8 Data dba: 0x030000d9 Extent 7 : L1 dba: 0x030000d8 Data dba: 0x030000e0 Extent 8 : L1 dba: 0x030000e8 Data dba: 0x030000e9 Extent 9 : L1 dba: 0x030000e8 Data dba: 0x030000f0 Extent 10 : L1 dba: 0x030000f8 Data dba: 0x030000f9 Extent 11 : L1 dba: 0x030000f8 Data dba: 0x03006000 Extent 12 : L1 dba: 0x03006008 Data dba: 0x03006009 Extent 13 : L1 dba: 0x03006008 Data dba: 0x03006010 Extent 14 : L1 dba: 0x03006018 Data dba: 0x03006019 Extent 15 : L1 dba: 0x03006018 Data dba: 0x03006020 Extent 16 : L1 dba: 0x04800080 Data dba: 0x04800082 Extent 17 : L1 dba: 0x03000100 Data dba: 0x03000102 Extent 18 : L1 dba: 0x04800100 Data dba: 0x04800102 Extent 19 : L1 dba: 0x03000180 Data dba: 0x03000182 Extent 20 : L1 dba: 0x04800180 Data dba: 0x04800182 Extent 21 : L1 dba: 0x03000200 Data dba: 0x03000202 Extent 22 : L1 dba: 0x04800200 Data dba: 0x04800202 Extent 23 : L1 dba: 0x03000280 Data dba: 0x03000282 Extent 24 : L1 dba: 0x04800280 Data dba: 0x04800282 Extent 25 : L1 dba: 0x03000300 Data dba: 0x03000302 Extent 26 : L1 dba: 0x04800300 Data dba: 0x04800302 -------------------------------------------------------- SCOTT@book01p> column PARTITION_NAME noprint SCOTT@book01p> select * from dba_extents where segment_name='T7' order by EXTENT_ID; OWNER SEGMENT_NAME SEGMENT_TYPE TABLESPACE_NAME EXTENT_ID FILE_ID BLOCK_ID BYTES BLOCKS RELATIVE_FNO ----- ------------ ------------ --------------- ---------- ---------- ---------- ---------- ---------- ------------ SCOTT T7 TABLE USERS 0 12 168 65536 8 12 SCOTT T7 TABLE USERS 1 12 176 65536 8 12 SCOTT T7 TABLE USERS 2 12 184 65536 8 12 SCOTT T7 TABLE USERS 3 12 192 65536 8 12 SCOTT T7 TABLE USERS 4 12 200 65536 8 12 SCOTT T7 TABLE USERS 5 12 208 65536 8 12 SCOTT T7 TABLE USERS 6 12 216 65536 8 12 SCOTT T7 TABLE USERS 7 12 224 65536 8 12 SCOTT T7 TABLE USERS 8 12 232 65536 8 12 SCOTT T7 TABLE USERS 9 12 240 65536 8 12 SCOTT T7 TABLE USERS 10 12 248 65536 8 12 SCOTT T7 TABLE USERS 11 12 24576 65536 8 12 SCOTT T7 TABLE USERS 12 12 24584 65536 8 12 SCOTT T7 TABLE USERS 13 12 24592 65536 8 12 SCOTT T7 TABLE USERS 14 12 24600 65536 8 12 SCOTT T7 TABLE USERS 15 12 24608 65536 8 12 SCOTT T7 TABLE USERS 16 18 128 1048576 128 18 SCOTT T7 TABLE USERS 17 12 256 1048576 128 12 SCOTT T7 TABLE USERS 18 18 256 1048576 128 18 SCOTT T7 TABLE USERS 19 12 384 1048576 128 12 SCOTT T7 TABLE USERS 20 18 384 1048576 128 18 SCOTT T7 TABLE USERS 21 12 512 1048576 128 12 SCOTT T7 TABLE USERS 22 18 512 1048576 128 18 SCOTT T7 TABLE USERS 23 12 640 1048576 128 12 SCOTT T7 TABLE USERS 24 18 640 1048576 128 18 SCOTT T7 TABLE USERS 25 12 768 1048576 128 12 SCOTT T7 TABLE USERS 26 18 768 1048576 128 18 27 rows selected. --//EXTENT_ID 0-15使用的数据文件12,EXTENT_ID=16开始使用数据文件18,然后相互交叉使用。 --//实际上Extent Map的信息与查询dba_extents视图结果一致。 --//理论讲扫描范围是 Data dba 到 对应Extent Map看到的dba + length -1. --//注:表太小,没有看到L2 dba的情况。 4.看看truncate table的情况: $ cat tr.txt column member new_value v_member column member noprint set numw 12 pause run alter system archive log current or alter system switch logfile; --//12c不允许在pluggable database执行这条命令 --//alter system archive log current; set termout off SELECT member FROM v$log a, v$logfile b WHERE a.group#(+) = b.group# and a.STATUS='CURRENT' and rownum=1; set termout on column curr1 new_value v_curr1 select current_scn curr1 from v$database; --//以下操作内容: truncate table t7 ; --//以上操作内容: column curr2 new_value v_curr2 select current_scn curr2 from v$database; prompt exec DBMS_LOGMNR.START_LOGMNR(STARTSCN => &&v_curr1 ,ENDSCN => &&v_curr2 ,OPTIONS => DBMS_LOGMNR.DICT_FROM_ONLINE_CATALOG + DBMS_LOGMNR.CONTINUOUS_MINE); prompt alter system dump logfile '&&v_member' scn min &&v_curr1 scn max &&v_curr2; @ti alter system dump logfile '&&v_member' scn min &&v_curr1 scn max &&v_curr2; SCOTT@book01p> @ tr.txt run alter system archive log current or alter system switch logfile CURR1 ------------ 41657823 Table truncated. CURR2 ------------ 41657925 exec DBMS_LOGMNR.START_LOGMNR(STARTSCN => 41657823 ,ENDSCN => 41657925 ,OPTIONS => DBMS_LOGMNR.DICT_FROM_ONLINE_CATALOG + DBMS_LOGMNR.CONTINUOUS_MINE) alter system dump logfile '/u01/oradata/BOOK/redo03.log' scn min 41657823 scn max 41657925 New tracefile_identifier = /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc System altered. 5.尝试恢复: --//分析转储: $ grep -i "ADD: dba" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -27 ADD: dba:0x3006038 len:8 at offset:1 ADD: dba:0x4800300 len:128 at offset:26 ADD: dba:0x3000300 len:128 at offset:25 ADD: dba:0x4800280 len:128 at offset:24 ADD: dba:0x3000280 len:128 at offset:23 ADD: dba:0x4800200 len:128 at offset:22 ADD: dba:0x3000200 len:128 at offset:21 ADD: dba:0x4800180 len:128 at offset:20 ADD: dba:0x3000180 len:128 at offset:19 ADD: dba:0x4800100 len:128 at offset:18 ADD: dba:0x3000100 len:128 at offset:17 ADD: dba:0x4800080 len:128 at offset:16 ADD: dba:0x3006020 len:8 at offset:15 ADD: dba:0x3006018 len:8 at offset:14 ADD: dba:0x3006010 len:8 at offset:13 ADD: dba:0x3006008 len:8 at offset:12 ADD: dba:0x3006000 len:8 at offset:11 ADD: dba:0x30000f8 len:8 at offset:10 ADD: dba:0x30000f0 len:8 at offset:9 ADD: dba:0x30000e8 len:8 at offset:8 ADD: dba:0x30000e0 len:8 at offset:7 ADD: dba:0x30000d8 len:8 at offset:6 ADD: dba:0x30000d0 len:8 at offset:5 ADD: dba:0x30000c8 len:8 at offset:4 ADD: dba:0x30000c0 len:8 at offset:3 ADD: dba:0x30000b8 len:8 at offset:2 ADD: dba:0x30000b0 len:8 at offset:1 --//反过来看与Extent Map信息对上。offset:0第1项没有删除。 --//也可以看出大致操作流程,先回收索引,然后最后才是表。加入tail -27,看到最后的操作就是表都段头的undo信息。 $ grep -i "ADDAXT:" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -27 ADDAXT: offset:1 fdba:x03006030 bdba:0x03006038 ADDAXT: offset:26 fdba:x04800300 bdba:0x04800302 ADDAXT: offset:25 fdba:x03000300 bdba:0x03000302 ADDAXT: offset:24 fdba:x04800280 bdba:0x04800282 ADDAXT: offset:23 fdba:x03000280 bdba:0x03000282 ADDAXT: offset:22 fdba:x04800200 bdba:0x04800202 ADDAXT: offset:21 fdba:x03000200 bdba:0x03000202 ADDAXT: offset:20 fdba:x04800180 bdba:0x04800182 ADDAXT: offset:19 fdba:x03000180 bdba:0x03000182 ADDAXT: offset:18 fdba:x04800100 bdba:0x04800102 ADDAXT: offset:17 fdba:x03000100 bdba:0x03000102 ADDAXT: offset:16 fdba:x04800080 bdba:0x04800082 ADDAXT: offset:15 fdba:x03006018 bdba:0x03006020 ADDAXT: offset:14 fdba:x03006018 bdba:0x03006019 ADDAXT: offset:13 fdba:x03006008 bdba:0x03006010 ADDAXT: offset:12 fdba:x03006008 bdba:0x03006009 ADDAXT: offset:11 fdba:x030000f8 bdba:0x03006000 ADDAXT: offset:10 fdba:x030000f8 bdba:0x030000f9 ADDAXT: offset:9 fdba:x030000e8 bdba:0x030000f0 ADDAXT: offset:8 fdba:x030000e8 bdba:0x030000e9 ADDAXT: offset:7 fdba:x030000d8 bdba:0x030000e0 ADDAXT: offset:6 fdba:x030000d8 bdba:0x030000d9 ADDAXT: offset:5 fdba:x030000c8 bdba:0x030000d0 ADDAXT: offset:4 fdba:x030000c8 bdba:0x030000c9 ADDAXT: offset:3 fdba:x030000b8 bdba:0x030000c0 ADDAXT: offset:2 fdba:x030000b8 bdba:0x030000b9 ADDAXT: offset:1 fdba:x030000a8 bdba:0x030000b0 --//反过来看与Auxillary Map信息对上。fdba对上的就是L1 dba。 ddba 对上Data dba。 --//AXT 可以理解为 Auxillary Table。 --//oracle redo转储输出还有点小问题,fdba的输出,前面的16进制少1个0. $ egrep "ADD: dba|ADDAXT:" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -52 | paste - - ADD: dba:0x4800300 len:128 at offset:26 ADDAXT: offset:26 fdba:x04800300 bdba:0x04800302 ADD: dba:0x3000300 len:128 at offset:25 ADDAXT: offset:25 fdba:x03000300 bdba:0x03000302 ADD: dba:0x4800280 len:128 at offset:24 ADDAXT: offset:24 fdba:x04800280 bdba:0x04800282 ADD: dba:0x3000280 len:128 at offset:23 ADDAXT: offset:23 fdba:x03000280 bdba:0x03000282 ADD: dba:0x4800200 len:128 at offset:22 ADDAXT: offset:22 fdba:x04800200 bdba:0x04800202 ADD: dba:0x3000200 len:128 at offset:21 ADDAXT: offset:21 fdba:x03000200 bdba:0x03000202 ADD: dba:0x4800180 len:128 at offset:20 ADDAXT: offset:20 fdba:x04800180 bdba:0x04800182 ADD: dba:0x3000180 len:128 at offset:19 ADDAXT: offset:19 fdba:x03000180 bdba:0x03000182 ADD: dba:0x4800100 len:128 at offset:18 ADDAXT: offset:18 fdba:x04800100 bdba:0x04800102 ADD: dba:0x3000100 len:128 at offset:17 ADDAXT: offset:17 fdba:x03000100 bdba:0x03000102 ADD: dba:0x4800080 len:128 at offset:16 ADDAXT: offset:16 fdba:x04800080 bdba:0x04800082 ADD: dba:0x3006020 len:8 at offset:15 ADDAXT: offset:15 fdba:x03006018 bdba:0x03006020 ADD: dba:0x3006018 len:8 at offset:14 ADDAXT: offset:14 fdba:x03006018 bdba:0x03006019 ADD: dba:0x3006010 len:8 at offset:13 ADDAXT: offset:13 fdba:x03006008 bdba:0x03006010 ADD: dba:0x3006008 len:8 at offset:12 ADDAXT: offset:12 fdba:x03006008 bdba:0x03006009 ADD: dba:0x3006000 len:8 at offset:11 ADDAXT: offset:11 fdba:x030000f8 bdba:0x03006000 ADD: dba:0x30000f8 len:8 at offset:10 ADDAXT: offset:10 fdba:x030000f8 bdba:0x030000f9 ADD: dba:0x30000f0 len:8 at offset:9 ADDAXT: offset:9 fdba:x030000e8 bdba:0x030000f0 ADD: dba:0x30000e8 len:8 at offset:8 ADDAXT: offset:8 fdba:x030000e8 bdba:0x030000e9 ADD: dba:0x30000e0 len:8 at offset:7 ADDAXT: offset:7 fdba:x030000d8 bdba:0x030000e0 ADD: dba:0x30000d8 len:8 at offset:6 ADDAXT: offset:6 fdba:x030000d8 bdba:0x030000d9 ADD: dba:0x30000d0 len:8 at offset:5 ADDAXT: offset:5 fdba:x030000c8 bdba:0x030000d0 ADD: dba:0x30000c8 len:8 at offset:4 ADDAXT: offset:4 fdba:x030000c8 bdba:0x030000c9 ADD: dba:0x30000c0 len:8 at offset:3 ADDAXT: offset:3 fdba:x030000b8 bdba:0x030000c0 ADD: dba:0x30000b8 len:8 at offset:2 ADDAXT: offset:2 fdba:x030000b8 bdba:0x030000b9 ADD: dba:0x30000b0 len:8 at offset:1 ADDAXT: offset:1 fdba:x030000a8 bdba:0x030000b0 --//这样扫描范围很容易确定:bdba:0x04800302 到 dba:0x4800300 + len:128 -1 --//后记:实际上还可以直接扫描dba:0x4800300 到 dba:0x4800300 + len:128 -1 的范围,这样简单许多。 $ egrep "ADD: dba|ADDAXT:" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -52 | paste - - | \ awk '{print substr($9,6),substr($2,5),substr($3,5) }' | tac 0x030000b0 0x30000b0 8 0x030000b9 0x30000b8 8 0x030000c0 0x30000c0 8 0x030000c9 0x30000c8 8 0x030000d0 0x30000d0 8 0x030000d9 0x30000d8 8 0x030000e0 0x30000e0 8 0x030000e9 0x30000e8 8 0x030000f0 0x30000f0 8 0x030000f9 0x30000f8 8 0x03006000 0x3006000 8 0x03006009 0x3006008 8 0x03006010 0x3006010 8 0x03006019 0x3006018 8 0x03006020 0x3006020 8 0x04800082 0x4800080 128 0x03000102 0x3000100 128 0x04800102 0x4800100 128 0x03000182 0x3000180 128 0x04800182 0x4800180 128 0x03000202 0x3000200 128 0x04800202 0x4800200 128 0x03000282 0x3000280 128 0x04800282 0x4800280 128 0x03000302 0x3000300 128 0x04800302 0x4800300 128 --//确定扫描范围: $ egrep "ADD: dba|ADDAXT:" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -52 | paste - - | \ awk '{printf( "%s 0x%x\n", substr($9,6),strtonum(substr($2,5))+substr($3,5)-1 ) }'| tac 0x030000b0 0x30000b7 0x030000b9 0x30000bf 0x030000c0 0x30000c7 0x030000c9 0x30000cf 0x030000d0 0x30000d7 0x030000d9 0x30000df 0x030000e0 0x30000e7 0x030000e9 0x30000ef 0x030000f0 0x30000f7 0x030000f9 0x30000ff 0x03006000 0x3006007 0x03006009 0x300600f 0x03006010 0x3006017 0x03006019 0x300601f 0x03006020 0x3006027 0x04800082 0x48000ff 0x03000102 0x300017f 0x04800102 0x480017f 0x03000182 0x30001ff 0x04800182 0x48001ff 0x03000202 0x300027f 0x04800202 0x480027f 0x03000282 0x30002ff 0x04800282 0x48002ff 0x03000302 0x300037f 0x04800302 0x480037f --//保存为xxx1.txt $ awk '{print "p /d " $1 ">>22\n","p /d " $1 "& 0x3ffff\n","p /d " $2 "& 0x3ffff" }' xxx1.txt | gdb | grep " = " | awk '{print $NF}' | paste -d" " - - - 12 176 183 12 185 191 12 192 199 12 201 207 12 208 215 12 217 223 12 224 231 12 233 239 12 240 247 12 249 255 12 24576 24583 12 24585 24591 12 24592 24599 12 24601 24607 12 24608 24615 18 130 255 12 258 383 18 258 383 12 386 511 18 386 511 12 514 639 18 514 639 12 642 767 18 642 767 12 770 895 18 770 895 --//简单解析: >>22 相当于移位22位取到文件号, & 0x3ffff 相当于位与,取后22位取到数据块号。 --//第一行解析dba = 12,176开始扫描到12,183。 --//加上EXTENT_ID=0的情况,就是整个扫描范围,加上 12 170 175 --//补充: 事后整理发现,直接使用awk 取证,取模更简单一些。 $ awk '{print int(strtonum($1)/2^22),int(strtonum($1)%2^22),int(strtonum($2)%2^22)}' xxx1.txt 12 176 183 12 185 191 12 192 199 12 201 207 12 208 215 12 217 223 12 224 231 12 233 239 12 240 247 12 249 255 12 24576 24583 12 24585 24591 12 24592 24599 12 24601 24607 12 24608 24615 18 130 255 12 258 383 18 258 383 12 386 511 18 386 511 12 514 639 18 514 639 12 642 767 18 642 767 12 770 895 18 770 895 SCOTT@book01p> select * from dba_extents where segment_name='T7' order by EXTENT_ID; OWNER SEGMENT_NAME SEGMENT_TYPE TABLESPACE_NAME EXTENT_ID FILE_ID BLOCK_ID BYTES BLOCKS RELATIVE_FNO ----- ------------ ------------------ --------------- ---------- ---------- ---------- ---------- ---------- ------------ SCOTT T7 TABLE USERS 0 12 168 65536 8 12 $ awk '{print "p /d " $1 ">>22\n","p /d " $1 "& 0x3ffff\n","p /d " $2 "& 0x3ffff" }' xxx1.txt | gdb | grep " = " | awk '{print $NF}' | paste -d" " - - - > xxx2.txt --//保存为xxx2.txt,并且加入 12 170 175 SYS@book01p> @ versions obj$ OBJ#,DATAOBJ#,name,mtime s '' '' "versions_operation='U' and name='T7'" VERSIONS_STARTTIME VERSIONS_ENDTIME VERSIONS_STARTSCN VERSIONS_ENDSCN VERSIONS_XID V ROWID OBJ# DATAOBJ# NAME MTIME -------------------- -------------------- ----------------- --------------- ---------------- - ------------------ ---------- ---------- ---- ------------------- 2025-05-14 09:20:58. 2025-05-14 09:21:04. 41644065 41644101 07000300A81F0000 U AAAAASAABAAAI6JAAA 129070 129070 T7 2025-05-14 09:20:59 2025-05-14 09:21:04. 2025-05-14 09:22:10. 41644101 41644327 02000700B31F0000 U AAAAASAABAAAI6JAAA 129070 129070 T7 2025-05-14 09:21:07 2025-05-14 09:22:10. 2025-05-14 09:59:47. 41644327 41657861 06001F005B1F0000 U AAAAASAABAAAI6JAAA 129070 129070 T7 2025-05-14 09:22:12 2025-05-14 09:59:47. 41657861 05001200AE1F0000 U AAAAASAABAAAI6JAAA 129070 129562 T7 2025-05-14 09:59:50 --//建立索引mtime发生变化。初始T7的DATAOBJ#=129070. --//执行如下出现问题: $ head -1 xxx2.txt | xargs -IQ ./finddoid.sh Q 129070 $ ps -ef ... oracle 9789 3096 0 15:56 pts/3 00:00:00 xargs -IQ ./finddoid.sh Q 129070 oracle 9790 9789 0 15:56 pts/3 00:00:00 /bin/bash ./finddoid.sh 12 170 175 129070 oracle 9792 9790 0 15:56 pts/3 00:00:00 /bin/seq -f %-1.0f 129070 oracle 9793 9790 3 15:56 pts/3 00:00:00 xargs -IQ echo -e host echo -n 12 170 175,Q \np /d dba 12 170 175,Q ktbbh.ktbbhsid.ktbbhod1 oracle 9794 9790 0 15:56 pts/3 00:00:00 /bin/bash ./finddoid.sh 12 170 175 129070 .... --//实际上将12 170 175完整的当作1个参数,解析错误。 --//修改如下才是正确的执行方式: $ head -1 xxx2.txt | xargs -IQ echo ./finddoid.sh Q 129070 | bash 129070 max_row = 66 scan result in 129070_scan.txt , scan max rows num result in 129070_max_rowsnum.txt --//$ >| 129070_scan.txt --//$ >| 129070_max_rowsnum.txt $ cat xxx2.txt | xargs -IQ echo ./finddoid.sh Q 129070 | bash ... scan range file# = 18 begin_block =770 end_block = 895 129070 max_row = 60 scan result in 129070_scan.txt , scan max rows num result in 129070_max_rowsnum.txt --//注:开始忘记修改filelist.txt的bbed配置文件加入,遗漏这部分数据块的扫描。 --// 18 /u01/oradata/BOOK/book01p/users02.dbf $ paste -d"," 129070_scan.txt 129070_max_rowsnum.txt | xargs -IQ echo " insert into scanblock values ( Q ); " > xxx3.txt --//使用t7_bak保存rowid扫描的数据记录。 SCOTT@book01p> create table t7_bak tablespace tsp_audit as select * from t7 where 0=1; Table created. SCOTT@book01p> create table scanblock ( file_id number,block_id number,rowsnum number ) tablespace TSP_AUDIT; Table created. --//执行xxx3.txt脚本,不要忘记提交。 SYS@book01p> update (select OBJ#,DATAOBJ#,OWNER#,NAME from obj$ where obj#=129070) set DATAOBJ#=129070; 1 row updated. SYS@book01p> commit ; Commit complete. SYS@book01p> alter system flush shared_pool; System altered. SYS@book01p> set timing on SYS@book01p> @ txt/truncTz.txt SCOTT T7 SCOTT T7_BAK PL/SQL procedure successfully completed. Elapsed: 00:00:03.08 SYS@book01p> set timing off --//可以发现这样执行很快完成。 COTT@book01p> select count(*) from t7_bak; COUNT(*) ---------- 69894 SCOTT@book01p> @ tab2 t7 Show tables matching condition "t7" (if schema is not specified then current user's tables only are shown)... OWNER TABLE_NAME TYPE NUM_ROWS BLOCKS EMPTY AVGSPC ROWLEN TAB_LAST_ANALYZED DEGREE COMPRESS ----- ---------- ---- ------------ ------------- --------- ------ ------ ------------------- -------------------- -------- SCOTT T7 TAB 69894 1507 0 0 145 2025-05-14 09:22:54 1 DISABLED --//原来的统计信息没有清除,说明恢复完成正确。 6.补充取字段2,字段3测试看看。 $ egrep "ADD: dba|ADDAXT:" /u01/app/oracle/diag/rdbms/book/book/trace/book_ora_3783_0003.trc | tail -52 | paste - - | awk '{print substr($2,5),substr($3,5) }' | tac > yyy1.txt $ awk '{print "p /d " $1 ">>22\n","p /d " $1 "& 0x3ffff\n","p /d ( " $1 "+" $2 " -1 ) & 0x3ffff" }' yyy1.txt | gdb | grep " = " | awk '{print $NF}' | paste -d" " - - - >| yyy2.txt --//修改加入 12 168 175 $ cat yyy2.txt | xargs -IQ echo ./finddoid.sh Q 129070 | bash $ paste -d"," 129070_scan.txt 129070_max_rowsnum.txt | xargs -IQ echo " insert into scanblock values ( Q ); " >| yyy3.txt $ diff xxx3.txt yyy3.txt --//生成的插入脚本一样说明没有问题,这样仅仅多扫描几个数据块。 7.附上finddoid.sh的代码,再次做了小量修改。 $ cat finddoid.sh #!/bin/bash # argv1=file_number argv2=begin_block argvs3=end_block argv4=data_object_id file_number=$1 begin_block=$2 end_block=$3 data_object_id=$4 # scan begin_block to end_block,define search scope. /bin/rm scan1.txt 2>/dev/null #seq -f "%-1.0f" $begin_block $end_block | xargs -IQ echo -e "host echo $file_number,Q \np /d dba $file_number,Q ktbbh.ktbbhsid.ktbbhod1" | \ #rlbbed | grep "^BBED" | egrep "$file_number,|ub4 ktbbhod1" | awk '{print $NF}' | paste -d" " - - | awk -v a=$data_object_id '$2==a {print $1}' >| scan1.txt /bin/seq -f "%-1.0f" $begin_block $end_block | xargs -IQ echo -e "host echo -n $file_number,Q \np /d dba $file_number,Q ktbbh.ktbbhsid.ktbbhod1" | \ rlbbed | grep -B1 --no-group-separator "ub4 ktbbhod1" | awk '{print $NF}' | paste -d" " - - | awk -v a=$data_object_id '$2==a {print $1}' >| scan1.txt /bin/cat scan1.txt >> ${data_object_id}_scan.txt # scan begin_block to end_block,obtain max row. /bin/rm scan2.txt 2>/dev/null /bin/cat scan1.txt | xargs -IQ echo p dba Q kdbt[0].kdbtnrow | rlbbed | grep kdbtnrow | awk '{print $NF}' >| scan2.txt max_row=$(sort -nr scan2.txt | head -1) /bin/cat scan2.txt >> ${data_object_id}_max_rowsnum.txt echo scan range file# = $file_number begin_block =$begin_block end_block = $end_block , doid = $data_object_id max_row = $max_row echo scan result in ${data_object_id}_scan.txt , scan max rows num result in ${data_object_id}_max_rowsnum.txt echo

[20250514]truncare table相关数据段的确定与恢复.txt

来源:这里教程网

时间:2026-03-03 21:56:56

作者:

编辑推荐:

- 京东敢烧钱做外卖,原来是因为电商赚麻了03-03

- [20250514]truncare table相关数据段的确定与恢复.txt03-03

- [20250515]drop table相关数据段的确定与恢复.txt03-03

- [20250515]删除在Pluggable Database设置的参数.txt03-03

- 深入浅出 Rust 并发:RwLock 与 Mutex 在 Tauri 项目中的实践03-03

- 创新靶点与双机制策略崛起,AACR揭幕ADC治疗新纪元03-03

- Robotaxi新消息密集释放,量产元年来临谁在领跑?03-03

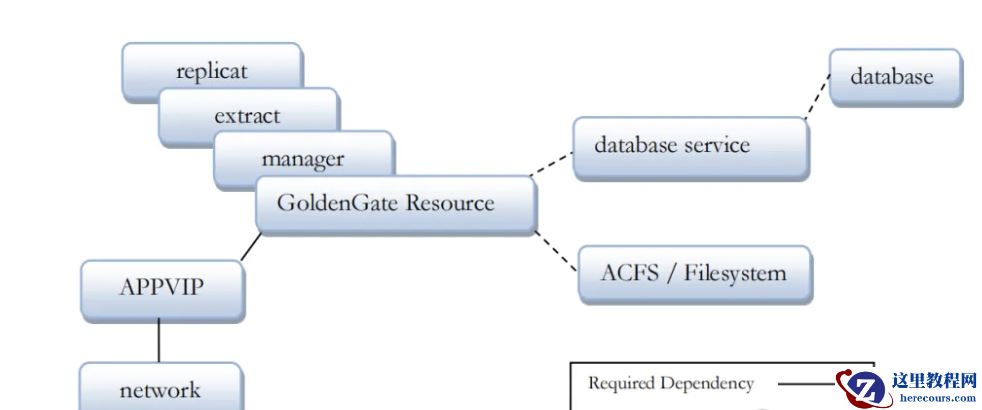

- Oracle集群+ACFS竟让OGG故障率归零,太不可思议了!03-03

下一篇:

相关推荐

-

雷神推出 MIX PRO II 迷你主机:基于 Ultra 200H,玻璃上盖 + ARGB 灯效

2 月 9 日消息,雷神 (THUNDEROBOT) 现已宣布推出基于英

-

制造商 Musnap 推出彩色墨水屏电纸书 Ocean C:支持手写笔、第三方安卓应用

2 月 10 日消息,制造商 Musnap 现已在海外推出一款 Oce

热文推荐

- 京东敢烧钱做外卖,原来是因为电商赚麻了

京东敢烧钱做外卖,原来是因为电商赚麻了

26-03-03 - 深入浅出 Rust 并发:RwLock 与 Mutex 在 Tauri 项目中的实践

- 创新靶点与双机制策略崛起,AACR揭幕ADC治疗新纪元

创新靶点与双机制策略崛起,AACR揭幕ADC治疗新纪元

26-03-03 - Robotaxi新消息密集释放,量产元年来临谁在领跑?

Robotaxi新消息密集释放,量产元年来临谁在领跑?

26-03-03 - Oracle集群+ACFS竟让OGG故障率归零,太不可思议了!

Oracle集群+ACFS竟让OGG故障率归零,太不可思议了!

26-03-03 - 刘强东上街送外卖,美团王兴还睡得着吗?

刘强东上街送外卖,美团王兴还睡得着吗?

26-03-03 - 【KWDB 创作者计划】_KWDB引领数据库技术革新的璀璨之星

【KWDB 创作者计划】_KWDB引领数据库技术革新的璀璨之星

26-03-03 - 全娱乐生态驱动增长,从财报看阿里影业穿越周期的发展韧性

全娱乐生态驱动增长,从财报看阿里影业穿越周期的发展韧性

26-03-03 - 被问能不能不还钱,周鸿祎称360借条“不是我公司”!真相来了

被问能不能不还钱,周鸿祎称360借条“不是我公司”!真相来了

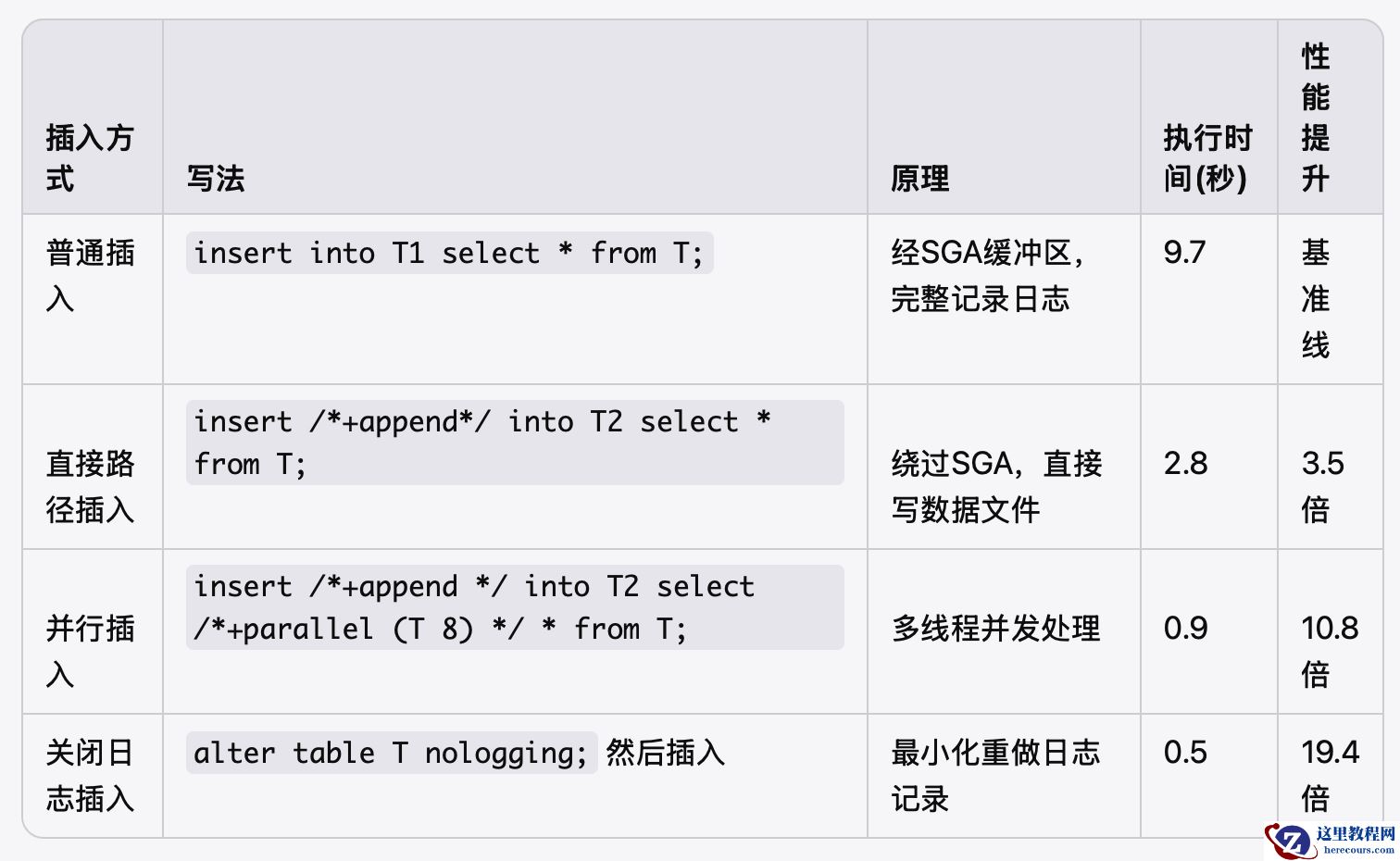

26-03-03 - 通用SQL优化经典等价改写【三】——插入提速

通用SQL优化经典等价改写【三】——插入提速

26-03-03