前言

关系膨胀概述

VACUUM不能解决表膨胀的问题,死元组本身能够被并发

VACUUM机制回收,但它产生的碎片,留下的空洞却不可以。比如,即使删除了许多死元组,也无法减小表的大小。久而久之,关系文件被大量空洞填满,浪费了大量的磁盘空间。VACUUM FULL

命令可以回收这些空间,它将旧表文件中的活元组复制到新表中,通过重写整张表的方式将表压实。但在实际生产中,因为该操作会持有表上的

AccessExclusiveLock,阻塞业务正常访问,因此在不间断服务的情况下并不适用,

pg_repack是一个实用的第三方插件,能够在线上业务正常进行的同时进行无锁的

VACUUM FULL。VACUUM FULL处理膨胀并没有一个最佳实践。DBA需要针对自己的业务场景制定清理策略。但无论采用何种策略,实施这些策略的机制都是类似的:关系膨胀的度量

膨胀率的精确计算

pgstattuple模块,可用于精确计算表的膨胀率。譬如这里的

tuple_percent字段就是元组实际字节占关系总大小的百分比,用1减去该值即为膨胀率。select *, 1.0 - tuple_len::numeric / table_len as bloat

from pgstattuple('pgbench_accounts');

│ table_len │ 136642560 │

│ tuple_count │ 1000000 │

│ tuple_len │ 121000000 │

│ tuple_percent │ 88.55 │

│ dead_tuple_count │ 16418 │

│ dead_tuple_len │ 1986578 │

│ dead_tuple_percent │ 1.45 │

│ free_space │ 1674768 │

│ free_percent │ 1.23 │

│ bloat │ 0.11447794889088729017 │

pgstattuple对于精确地判断表与索引的膨胀情况非常有用,具体细节可以参考官方文档:

https://www.postgresql.org/docs/current/static/pgstattuple.html

。pg_freespacemap与

pageinspect,前者可以用于检视每个页面中的空闲空间大小,后者则可以精确地展示关系中每个数据页内物理存储的内容。如果希望检视关系的内部状态,这两个插件非常实用,详细使用方法可以参考官方文档:pgstattuple插件执行精确的膨胀率估算大致需要5分钟时间。在9.5及后续版本,

pgstattuple插件还提供了

pgstattuple_approx函数,以精度换速度。但即使使用估算,也需要秒级的时间。膨胀率的估算

avgwidth:从列级统计数据计算而来,用于估计紧实状态占用的空间。pg_class.reltuples:用于估计紧实状态占用的空间pg_class.relpages:用于测算实际使用的空间1 - (reltuples * avgwidth) / (block_size - pageheader) / relpages

block_size是页面大小,默认为8182,

pageheader是首部占用的大小,默认为24字节。页面大小减去首部大小就是可以用于元组存储的实际空间,因此

(reltuples * avgwidth)给出了元组的估计总大小,而除以前者后,就可以得到预计需要多少个页面才能紧实地存下所有的元组。最后,期待使用的页面数量,除以实际使用的页面数量,就是

利用率,而1减去利用率,就是膨胀率。难点

计算元组的平均长度

MAXALIGN,通常为8。WITH OIDS选项,元组还会有一个4字节的OID,但这里我们不考虑该情况。avg_size_tuple = 4 + avg_size_hdr + avg_size_data

计算首部的平均长度

normhdrnullhdrnullfracavg_size_hdr = nullhdr * nullfrac + normhdr * (1 - nullfrac)

avg_size_hdr = nullhdr * nullfrac + 24 * (1 - nullfrac)

padding = lambda x : x + 7 >> 3 << 3

计算数据部分的平均长度

SELECT schemaname, tablename, sum((1 - null_frac) * avg_width) FROM pg_stats GROUP BY (schemaname, tablename);

pg_stats系统统计视图中获取

app.apple表上一条元组的平均长度。SELECT count(*), -- 字段数目 ceil(count(*) / 8.0), -- 空值位图占用的字节数 max(null_frac), -- 最大空值率 sum((1 - null_frac) * avg_width) -- 数据部分的平均宽度 FROM pg_stats where schemaname = 'app' and tablename = 'apple'; -[ RECORD 1 ]----------- count | 47 ceil | 6 max | 1 sum | 1733.76873471724

整合

CREATE OR REPLACE FUNCTION public . pg_table_bloat (relation regclass )

RETURNS double precision LANGUAGE plpgsql AS $function$ DECLARE _schemaname text; tuples BIGINT := 0; pages INTEGER := 0; nullheader INTEGER:= 0; nullfrac FLOAT := 0; datawidth INTEGER :=0; avgtuplelen FLOAT :=24; BEGIN SELECT relnamespace :: RegNamespace, reltuples, relpages into _schemaname, tuples, pages FROM pg_class Where oid = relation; SELECT 23 + ceil(count(*) >> 3), max(null_frac), ceil(sum((1 - null_frac) * avg_width)) into nullheader, nullfrac, datawidth FROM pg_stats where schemaname = _schemaname and tablename = relation :: text; SELECT (datawidth + 8 - (CASE WHEN datawidth%8=0 THEN 8 ELSE datawidth%8 END)) -- avg data len + (1 - nullfrac) * 24 + nullfrac * (nullheader + 8 - (CASE WHEN nullheader%8=0 THEN 8 ELSE nullheader%8 END)) INTO avgtuplelen; raise notice '% %', nullfrac, datawidth; RETURN 1 - (ceil(tuples * avgtuplelen / 8168)) / pages; END; $function$

批量计算

DROP VIEW IF EXISTS monitor.pg_bloat_indexes CASCADE;

CREATE OR REPLACE VIEW monitor.pg_bloat_indexes AS

WITH btree_index_atts AS (

SELECT

pg_namespace.nspname,

indexclass.relname AS index_name,

indexclass.reltuples,

indexclass.relpages,

pg_index.indrelid,

pg_index.indexrelid,

indexclass.relam,

tableclass.relname AS tablename,

(regexp_split_to_table((pg_index.indkey) :: TEXT, ' ' :: TEXT)) :: SMALLINT AS attnum,

pg_index.indexrelid AS index_oid

FROM ((((pg_index

JOIN pg_class indexclass ON ((pg_index.indexrelid = indexclass.oid)))

JOIN pg_class tableclass ON ((pg_index.indrelid = tableclass.oid)))

JOIN pg_namespace ON ((pg_namespace.oid = indexclass.relnamespace)))

JOIN pg_am ON ((indexclass.relam = pg_am.oid)))

WHERE ((pg_am.amname = 'btree' :: NAME) AND (indexclass.relpages > 0))

), index_item_sizes AS (

SELECT

ind_atts.nspname,

ind_atts.index_name,

ind_atts.reltuples,

ind_atts.relpages,

ind_atts.relam,

ind_atts.indrelid AS table_oid,

ind_atts.index_oid,

(current_setting('block_size' :: TEXT)) :: NUMERIC AS bs,

8 AS maxalign,

24 AS pagehdr,

CASE

WHEN (max(COALESCE(pg_stats.null_frac, (0) :: REAL)) = (0) :: FLOAT)

THEN 2

ELSE 6

END AS index_tuple_hdr,

sum((((1) :: FLOAT - COALESCE(pg_stats.null_frac, (0) :: REAL)) *

(COALESCE(pg_stats.avg_width, 1024)) :: FLOAT)) AS nulldatawidth

FROM ((pg_attribute

JOIN btree_index_atts ind_atts

ON (((pg_attribute.attrelid = ind_atts.indexrelid) AND (pg_attribute.attnum = ind_atts.attnum))))

JOIN pg_stats ON (((pg_stats.schemaname = ind_atts.nspname) AND (((pg_stats.tablename = ind_atts.tablename) AND

((pg_stats.attname) :: TEXT =

pg_get_indexdef(pg_attribute.attrelid,

(pg_attribute.attnum) :: INTEGER,

TRUE))) OR

((pg_stats.tablename = ind_atts.index_name) AND

(pg_stats.attname = pg_attribute.attname))))))

WHERE (pg_attribute.attnum > 0)

GROUP BY ind_atts.nspname, ind_atts.index_name, ind_atts.reltuples, ind_atts.relpages, ind_atts.relam,

ind_atts.indrelid, ind_atts.index_oid, (current_setting('block_size' :: TEXT)) :: NUMERIC, 8 :: INTEGER

), index_aligned_est AS (

SELECT

index_item_sizes.maxalign,

index_item_sizes.bs,

index_item_sizes.nspname,

index_item_sizes.index_name,

index_item_sizes.reltuples,

index_item_sizes.relpages,

index_item_sizes.relam,

index_item_sizes.table_oid,

index_item_sizes.index_oid,

COALESCE(ceil((((index_item_sizes.reltuples * ((((((((6 + index_item_sizes.maxalign) -

CASE

WHEN ((index_item_sizes.index_tuple_hdr %

index_item_sizes.maxalign) = 0)

THEN index_item_sizes.maxalign

ELSE (index_item_sizes.index_tuple_hdr %

index_item_sizes.maxalign)

END)) :: FLOAT + index_item_sizes.nulldatawidth)

+ (index_item_sizes.maxalign) :: FLOAT) - (

CASE

WHEN (((index_item_sizes.nulldatawidth) :: INTEGER %

index_item_sizes.maxalign) = 0)

THEN index_item_sizes.maxalign

ELSE ((index_item_sizes.nulldatawidth) :: INTEGER %

index_item_sizes.maxalign)

END) :: FLOAT)) :: NUMERIC) :: FLOAT) /

((index_item_sizes.bs - (index_item_sizes.pagehdr) :: NUMERIC)) :: FLOAT) +

(1) :: FLOAT)), (0) :: FLOAT) AS expected

FROM index_item_sizes

), raw_bloat AS (

SELECT

current_database() AS dbname,

index_aligned_est.nspname,

pg_class.relname AS table_name,

index_aligned_est.index_name,

(index_aligned_est.bs * ((index_aligned_est.relpages) :: BIGINT) :: NUMERIC) AS totalbytes,

index_aligned_est.expected,

CASE

WHEN ((index_aligned_est.relpages) :: FLOAT <= index_aligned_est.expected)

THEN (0) :: NUMERIC

ELSE (index_aligned_est.bs *

((((index_aligned_est.relpages) :: FLOAT - index_aligned_est.expected)) :: BIGINT) :: NUMERIC)

END AS wastedbytes,

CASE

WHEN ((index_aligned_est.relpages) :: FLOAT <= index_aligned_est.expected)

THEN (0) :: NUMERIC

ELSE (((index_aligned_est.bs * ((((index_aligned_est.relpages) :: FLOAT -

index_aligned_est.expected)) :: BIGINT) :: NUMERIC) * (100) :: NUMERIC) /

(index_aligned_est.bs * ((index_aligned_est.relpages) :: BIGINT) :: NUMERIC))

END AS realbloat,

pg_relation_size((index_aligned_est.table_oid) :: REGCLASS) AS table_bytes,

stat.idx_scan AS index_scans

FROM ((index_aligned_est

JOIN pg_class ON ((pg_class.oid = index_aligned_est.table_oid)))

JOIN pg_stat_user_indexes stat ON ((index_aligned_est.index_oid = stat.indexrelid)))

), format_bloat AS (

SELECT

raw_bloat.dbname AS database_name,

raw_bloat.nspname AS schema_name,

raw_bloat.table_name,

raw_bloat.index_name,

round(

raw_bloat.realbloat) AS bloat_pct,

round((raw_bloat.wastedbytes / (((1024) :: FLOAT ^

(2) :: FLOAT)) :: NUMERIC)) AS bloat_mb,

round((raw_bloat.totalbytes / (((1024) :: FLOAT ^ (2) :: FLOAT)) :: NUMERIC),

3) AS index_mb,

round(

((raw_bloat.table_bytes) :: NUMERIC / (((1024) :: FLOAT ^ (2) :: FLOAT)) :: NUMERIC),

3) AS table_mb,

raw_bloat.index_scans

FROM raw_bloat

)

SELECT

format_bloat.database_name as datname,

format_bloat.schema_name as nspname,

format_bloat.table_name as relname,

format_bloat.index_name as idxname,

format_bloat.index_scans as idx_scans,

format_bloat.bloat_pct as bloat_pct,

format_bloat.table_mb,

format_bloat.index_mb - format_bloat.bloat_mb as actual_mb,

format_bloat.bloat_mb,

format_bloat.index_mb as total_mb

FROM format_bloat

ORDER BY format_bloat.bloat_mb DESC;

COMMENT ON VIEW monitor.pg_bloat_indexes IS 'index bloat monitor';

表膨胀的处理

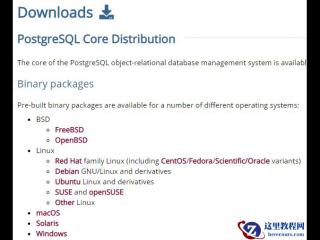

VACUUM FULL就可以了。但对于需要不间断运行的数据库,我们就需要用到

pg_repack来处理表的膨胀。pg_repack已经包含在了PostgreSQL官方的yum源中,因此可以直接通过

yum install pg_repack安装。yum install pg_repack10

pg_repack的使用

pg_repack也通过类似的参数连接至PostgreSQL服务器。pg_repack之前,需要在待重整的数据库中创建

pg_repack扩展CREATE EXTENSION pg_repack

# 完全清理整个数据库,开5个并发任务,超时等待10秒 pg_repack -d <database> -j 5 -T 10 # 清理mydb中一张特定的表mytable,超时等待10秒 pg_repack mydb -t public.mytable -T 10 # 清理某个特定的索引 myschema.myindex,注意必须使用带模式的全名 pg_repack mydb -i myschema.myindex

pg_repack的策略

#--------------------------------------------------------------#

# Name: repack_tables

# Desc: repack table via fullname

# Arg1: database_name

# Argv: list of table full name

# Deps: psql

#--------------------------------------------------------------#

# repack single table

function repack_tables(){

local db=$1

shift

log_info "repack ${db} tables begin"

log_info "repack table list: $@"

for relname in $@

do

old_size=$(psql ${db} -Atqc "SELECT pg_size_pretty(pg_relation_size('${relname}'));")

# kill_queries ${db}

log_info "repack table ${relname} begin, old size: ${old_size}"

pg_repack ${db} -T 10 -t ${relname}

new_size=$(psql ${db} -Atqc "SELECT pg_size_pretty(pg_relation_size('${relname}'));")

log_info "repack table ${relname} done , new size: ${old_size} -> ${new_size}"

done

log_info "repack ${db} tables done"

}

#--------------------------------------------------------------#

# Name: get_bloat_tables

# Desc: find bloat tables in given database match some condition

# Arg1: database_name

# Echo: list of full table name

# Deps: psql, monitor.pg_bloat_tables

#--------------------------------------------------------------#

function get_bloat_tables(){

echo $(psql ${1} -Atq <<-'EOF'

WITH bloat_tables AS (

SELECT

nspname || '.' || relname as relname,

actual_mb,

bloat_pct

FROM monitor.pg_bloat_tables

WHERE nspname NOT IN ('dba', 'monitor', 'trash')

ORDER BY 2 DESC,3 DESC

)

-- 64 small + 16 medium + 4 large

(SELECT relname FROM bloat_tables WHERE actual_mb < 256 AND bloat_pct > 40 ORDER BY bloat_pct DESC LIMIT 64) UNION

(SELECT relname FROM bloat_tables WHERE actual_mb BETWEEN 256 AND 1024 AND bloat_pct > 30 ORDER BY bloat_pct DESC LIMIT 16) UNION

(SELECT relname FROM bloat_tables WHERE actual_mb BETWEEN 1024 AND 4096 AND bloat_pct > 20 ORDER BY bloat_pct DESC LIMIT 4);

EOF

)

}

pg_repack的原理

pg_repack的原理相当简单,它会为待重建的表创建一份副本。首先取一份全量快照,将所有活元组写入新表,并通过触发器将所有针对原表的变更同步至新表,最后通过重命名,使用新的紧实副本替换老表。而对于索引,则是通过PostgreSQL的

CREATE(DROP) INDEX CONCURRENTLY完成的。-

创建一张原始表的相应日志表。 -

为原始表添加行触发器,在相应日志表中记录所有 INSERT,DELETE,UPDATE操作。 -

创建一张包含老表所有行的表。 -

在新表上创建同样的索引 -

将日志表中的增量变更应用到新表上 -

使用系统目录切换表,相关索引,相关Toast表。

-

使用 CREATE INDEX CONCURRENTLY在原表上创建新索引,保持与旧索引相同的定义。 -

在数据目录中将新旧索引交换。 -

删除旧索引。

pg_repack的注意事项

${schema_name}.table_${table_oid}${schema_name}.index_${table_oid}}文章来源于非法加冯 ,作者Vonng