I. One voting disk damaged

1 Corrupt the disk

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskb count=500 bs=10M

[grid@db1 ~]$ crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. PENDOFFL 8a1d4fc8a71e4f98bffd89ced976f05f (/dev/asm-diskc) [OCR]

2. ONLINE 720e2a0144384f5dbf3dcc3b1de5e5a4 (/dev/asm-diskd) [OCR]

3. ONLINE 163de21caef14fdcbf93110a1638f30e (/dev/asm-diskb) [OCR]
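The listing above can also be checked from a script. The following is a minimal sketch, not part of the original procedure: `count_online_votedisks` is a hypothetical helper name, and it assumes the `crsctl query css votedisk` layout shown above, where the STATE value is the second field of each numbered row.

```shell
#!/bin/sh
# Count the voting files reported as ONLINE.
# Reads `crsctl query css votedisk` output on stdin; only numbered rows
# ("1.", "2.", ...) are considered, so header lines are skipped.
count_online_votedisks() {
    awk '$1 ~ /^[0-9]+\.$/ && $2 == "ONLINE" { n++ } END { print n + 0 }'
}

# Example using the listing shown above (one file is PENDOFFL):
count_online_votedisks <<'EOF'
##  STATE    File Universal Id                File Name Disk group
--  -----    -----------------                --------- ---------
 1. PENDOFFL 8a1d4fc8a71e4f98bffd89ced976f05f (/dev/asm-diskc) [OCR]
 2. ONLINE   720e2a0144384f5dbf3dcc3b1de5e5a4 (/dev/asm-diskd) [OCR]
 3. ONLINE   163de21caef14fdcbf93110a1638f30e (/dev/asm-diskb) [OCR]
EOF
```

On a cluster node the helper would be fed live output, for example `crsctl query css votedisk | count_online_votedisks`.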

Stop the cluster:

crsctl stop cluster -all

Start a single node:

crsctl start crs

2 Verify

[root@db1 grid]# crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4535: Cannot communicate with Cluster Ready Services

CRS-4529: Cluster Synchronization Services is online

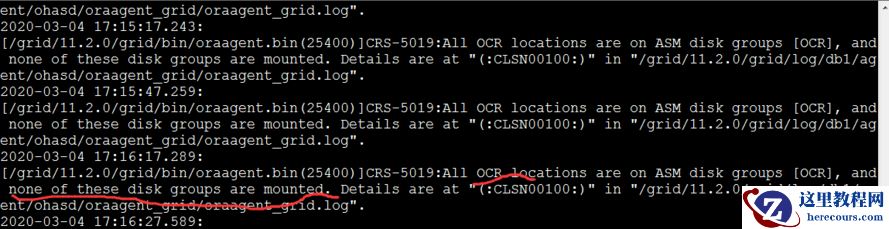

CRS-4534: Cannot communicate with Event Manager

CRS did not start successfully; check the logs.
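The four status lines can be tested mechanically. This is a hedged sketch: `crs_stack_online` is a hypothetical helper, and it only relies on the message codes that appear in this article (CRS-4638, CRS-4537, CRS-4529 and CRS-4533 for the "online" case).

```shell
#!/bin/sh
# Succeed only if all four "is online" messages are present.
# Reads `crsctl check crs` output on stdin.
crs_stack_online() {
    crs_out=$(cat)
    for crs_code in CRS-4638 CRS-4537 CRS-4529 CRS-4533; do
        # Each message starts its own line with the code and a colon.
        printf '%s\n' "$crs_out" | grep -q "^$crs_code:" || return 1
    done
    return 0
}

# Hypothetical use on a cluster node:
#   crsctl check crs | crs_stack_online && echo "stack is fully online"
```

With the output shown above (CRS-4535 and CRS-4534 in place of CRS-4537 and CRS-4533) the function returns a non-zero status.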

3 Mount the disk group

SQL> select NAME,STATE from v$asm_diskgroup;

NAME STATE

------------------------------ -----------

ARCH MOUNTED

DATA MOUNTED

OCR DISMOUNTED

SQL> alter diskgroup ocr mount;

alter diskgroup ocr mount

*

ERROR at line 1:

ORA-15032: not all alterations performed

ORA-15040: diskgroup is incomplete

ORA-15042: ASM disk "0" is missing from group number "3"

SQL> alter diskgroup ocr mount force;

Diskgroup altered.

SQL> select NAME,STATE from v$asm_diskgroup;

NAME STATE

------------------------------ -----------

ARCH MOUNTED

DATA MOUNTED

OCR MOUNTED

4 Stop, then start again

[root@db1 grid]# crsctl stop crs -f

[root@db1 grid]# crsctl start crs

[root@db1 grid]# crsctl check crs    -- wait a while; startup takes time

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online
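Because the stack needs some time before all services report online, the check can be wrapped in a polling loop. A minimal sketch follows; `retry_until` is a hypothetical helper, and the timeout and interval values in the usage comment are arbitrary assumptions.

```shell
#!/bin/sh
# Re-run a command until it succeeds or the time budget is used up.
# usage: retry_until TIMEOUT_SECONDS INTERVAL_SECONDS command [args...]
retry_until() {
    retry_timeout=$1
    retry_interval=$2
    shift 2
    retry_elapsed=0
    while ! "$@"; do
        # Give up once the accumulated wait reaches the timeout.
        if [ "$retry_elapsed" -ge "$retry_timeout" ]; then
            return 1
        fi
        sleep "$retry_interval"
        retry_elapsed=$((retry_elapsed + retry_interval))
    done
    return 0
}

# Hypothetical use on a cluster node (wait up to 600 s, polling every 10 s,
# until Cluster Ready Services reports online):
#   retry_until 600 10 sh -c 'crsctl check crs | grep -q "^CRS-4537:"'
```

The loop only counts sleep time, so the real wall-clock limit is somewhat longer than the timeout when the checked command itself is slow.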

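5 Add the sdb disk to the OCR disk group

[grid@db1 ~]$ asmca

The disk could not be added through the asmca GUI, so it had to be added from the command line.

[grid@db1 ~]$ sqlplus / as sysasm

SQL> col path for a20

SQL> select group_number,disk_number ,path from v$asm_disk;

GROUP_NUMBER DISK_NUMBER PATH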

------------ ----------- --------------------

0 0 /dev/asm-diskb

3 0

2 1 /dev/asm-diskf

2 0 /dev/asm-diske

3 2 /dev/asm-diskd

3 1 /dev/asm-diskc

1 1 /dev/asm-diskh

1 0 /dev/asm-diskg
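In this listing the wiped disk shows up with GROUP_NUMBER 0, meaning ASM can see it but it is not part of a mounted disk group, while the row with group 3 and no path is the missing member. A small sketch for picking such candidate disks out of the listing is shown here; `unassigned_asm_disks` is a hypothetical helper and assumes the three-column layout above.

```shell
#!/bin/sh
# Print the PATH of every disk whose GROUP_NUMBER is 0.
# Reads the GROUP_NUMBER / DISK_NUMBER / PATH listing on stdin.
# Rows without a path (such as "3 0") are ignored.
unassigned_asm_disks() {
    awk '$1 == "0" && $3 ~ /^\// { print $3 }'
}

# Example using the first rows of the listing shown above:
unassigned_asm_disks <<'EOF'
GROUP_NUMBER DISK_NUMBER PATH
------------ ----------- --------------------
           0           0 /dev/asm-diskb
           3           0
           2           1 /dev/asm-diskf
EOF
```

The printed path is the one used in the `alter diskgroup ocr add disk` statement that follows.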

SQL> alter diskgroup ocr add disk '/dev/asm-diskb';

SQL> select group_number,disk_number ,path from v$asm_disk;

GROUP_NUMBER DISK_NUMBER PATH

------------ ----------- --------------------

3 0

3 3 /dev/asm-diskb

2 1 /dev/asm-diskf

2 0 /dev/asm-diske

3 2 /dev/asm-diskd

3 1 /dev/asm-diskc

1 1 /dev/asm-diskh

1 0 /dev/asm-diskg

6 Verify

[grid@db1 ~]$ crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 8a1d4fc8a71e4f98bffd89ced976f05f (/dev/asm-diskc) [OCR]

2. ONLINE 720e2a0144384f5dbf3dcc3b1de5e5a4 (/dev/asm-diskd) [OCR]

3. ONLINE 163de21caef14fdcbf93110a1638f30e (/dev/asm-diskb) [OCR]

Located 3 voting disk(s).

[grid@db1 ~]$ asmcmd

ASMCMD> lsop

Group_Name Dsk_Num State Power EST_WORK EST_RATE EST_TIME

[root@db1 grid]# crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE db1

ora.gsd

OFFLINE OFFLINE db1

ora.net1.network

ONLINE ONLINE db1

ora.ons

ONLINE ONLINE db1

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.LISTENER_SCAN1.lsnr

1 ONLINE ONLINE db1

ora.db1.vip

1 ONLINE ONLINE db1

ora.db2.vip

1 ONLINE INTERMEDIATE db1 FAILED OVER

ora.orcl.db

1 ONLINE ONLINE db1 Open

2 ONLINE OFFLINE

ora.scan1.vip

1 ONLINE ONLINE db1

7 Node 2 also needs to be started

[root@db2 grid]# crsctl start crs

CRS-4640: Oracle High Availability Services is already active

CRS-4000: Command Start failed, or completed with errors.

[root@db2 grid]# crsctl stop crs -f

[root@db2 grid]# crsctl start crs

[root@db2 grid]# crsctl check crs    -- wait a while

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online

[root@db2 grid]# crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.LISTENER_SCAN1.lsnr

1 ONLINE ONLINE db1

ora.db1.vip

1 ONLINE ONLINE db1

ora.db2.vip

1 ONLINE ONLINE db2

ora.orcl.db

1 ONLINE ONLINE db1 Open

2 ONLINE ONLINE db2 Open

ora.scan1.vip

1 ONLINE ONLINE db1

II. Two voting disks damaged

1 Back up the OCR

[root@db1 grid]# ocrconfig -manualbackup
db1 2020/03/04 19:38:35 /grid/11.2.0/grid/cdata/db-cluster/backup_20200304_193835.ocr

[root@db1 grid]# ocrconfig -showbackup
db2 2020/03/04 12:49:04 /grid/11.2.0/grid/cdata/db-cluster/backup00.ocr
db2 2020/03/03 08:08:58 /grid/11.2.0/grid/cdata/db-cluster/backup01.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/backup02.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/day.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/week.ocr
db1 2020/03/04 19:38:35 /grid/11.2.0/grid/cdata/db-cluster/backup_20200304_193835.ocr

2 Corrupt the disks

[grid@db1 ~]$ crsctl query css votedisk
## STATE File Universal Id File Name Disk group
-- ----- ----------------- --------- ---------
1. ONLINE 8a1d4fc8a71e4f98bffd89ced976f05f (/dev/asm-diskc) [OCR]
2. ONLINE 720e2a0144384f5dbf3dcc3b1de5e5a4 (/dev/asm-diskd) [OCR]
3. ONLINE 163de21caef14fdcbf93110a1638f30e (/dev/asm-diskb) [OCR]

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskb count=500 bs=10M
dd: writing `/dev/asm-diskb': No space left on device
103+0 records in
102+0 records out
1073741824 bytes (1.1 GB) copied, 31.3009 s, 34.3 MB/s

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskc count=500 bs=10M
dd: writing `/dev/asm-diskc': No space left on device
103+0 records in
102+0 records out

Follow the alert log:

[grid@db1 db1]$ tail -f alertdb1.log
2020-03-04 19:45:45.893: [cssd(14265)]CRS-1614:No I/O has completed after 75% of the maximum interval. Voting file /dev/asm-diskb will be considered not functional in 49950 milliseconds
2020-03-04 19:46:15.952: [cssd(14265)]CRS-1613:No I/O has completed after 90% of the maximum interval. Voting file /dev/asm-diskb will be considered not functional in 19900 milliseconds
2020-03-04 19:46:28.962: [cssd(14265)]CRS-1615:No I/O has completed after 50% of the maximum interval. Voting file /dev/asm-diskc will be considered not functional in 99150 milliseconds
2020-03-04 19:46:35.963: [cssd(14265)]CRS-1604:CSSD voting file is offline: /dev/asm-diskb; details at (:CSSNM00058:) in /grid/11.2.0/grid/log/db1/cssd/ocssd.log.
2020-03-04 19:47:18.992: [cssd(14265)]CRS-1614:No I/O has completed after 75% of the maximum interval. Voting file /dev/asm-diskc will be considered not functional in 49130 milliseconds
...
[cssd(22611)]CRS-1705:Found 1 configured voting files but 2 voting files are required, terminating to ensure data integrity; details at (:CSSNM00021:) in /grid/11.2.0/grid/log/db1/cssd/ocssd.log
2020-03-04 19:48:49.994: [cssd(22611)]CRS-1656:The CSS daemon is terminating due to a fatal error; Details at (:CSSSC00012:) in /grid/11.2.0/grid/log/db1/cssd/ocssd.log
2020-03-04 19:48:50.008: [cssd(22611)]CRS-1603:CSSD on node db1 shutdown by user.
2020-03-04 19:48:53.186: [ohasd(14019)]CRS-2765:Resource 'ora.crsd' has failed on server 'db1'

-- Check CRS; it is not healthy either.

[root@db1 grid]# crsctl check crs
CRS-4638: Oracle High Availability Services is online
CRS-4535: Cannot communicate with Cluster Ready Services
CRS-4530: Communications failure contacting Cluster Synchronization Services daemon
CRS-4534: Cannot communicate with Event Manager

The voting disk information can no longer be queried:

[root@db1 grid]# crsctl query css votedisk
Unable to communicate with the Cluster Synchronization Services daemon.

3 Stop the cluster, then try a normal restart

[root@db1 grid]# crsctl stop crs -f

[root@db1 grid]# crsctl start crs
CRS-4123: Oracle High Availability Services has been started.
[root@db1 grid]# crsctl check crs
CRS-4638: Oracle High Availability Services is online
CRS-4535: Cannot communicate with Cluster Ready Services
CRS-4530: Communications failure contacting Cluster Synchronization Services daemon
CRS-4534: Cannot communicate with Event Manager

Neither CRS nor CSS comes up, which indicates that both the OCR and the voting disks are affected. The only option is to stop the stack and restart it in exclusive mode. Exclusive mode skips the voting disks, and adding the -nocrs option skips the OCR.

[root@db1 grid]# crsctl stop has -f

[root@db1 grid]# crsctl start crs -excl -nocrs

[root@db1 grid]# crsctl check crs
CRS-4638: Oracle High Availability Services is online
CRS-4535: Cannot communicate with Cluster Ready Services
CRS-4529: Cluster Synchronization Services is online
CRS-4534: Cannot communicate with Event Manager

4 Check the disk groups

SQL> select NAME,STATE from v$asm_diskgroup;
NAME STATE
------------------------------ -----------
DATA DISMOUNTED
OCR DISMOUNTED
ARCH DISMOUNTED

Force-mount the OCR, DATA and ARCH disk groups:

SQL> alter diskgroup ocr mount force;
alter diskgroup ocr mount force
*
ERROR at line 1:
ORA-15032: not all alterations performed
ORA-15017: diskgroup "OCR" cannot be mounted
ORA-15063: ASM discovered an insufficient number of disks for diskgroup "OCR"

SQL> alter diskgroup data mount force;
Diskgroup altered.

SQL> alter diskgroup arch mount force;
Diskgroup altered.

SQL> select NAME,STATE from v$asm_diskgroup;
NAME STATE
------------------------------ -----------
DATA MOUNTED
OCR DISMOUNTED
ARCH MOUNTED

[root@db1 grid]# crsctl query css votedisk
## STATE File Universal Id File Name Disk group
-- ----- ----------------- --------- ---------
1. OFFLINE 8a1d4fc8a71e4f98bffd89ced976f05f () []
2. ONLINE 720e2a0144384f5dbf3dcc3b1de5e5a4 (/dev/asm-diskd) [OCR]
3. OFFLINE 163de21caef14fdcbf93110a1638f30e () []

The OCR disk group cannot be mounted, so the only way forward is to remove it, re-create it, and then restore from backup: first the OCR, then the voting disks.

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskd count=500 bs=10M

Only two disk groups are left now:

SQL> select NAME,STATE from v$asm_diskgroup;
NAME STATE
------------------------------ -----------
DATA MOUNTED
ARCH MOUNTED

5 Create the disk group

(The create diskgroup statement itself is not shown at this point in the log; the summary at the end of this part lists it.)

Diskgroup created.
SQL> select NAME,STATE from v$asm_diskgroup;
NAME STATE
------------------------------ -----------
DATA MOUNTED
OCR MOUNTED
ARCH MOUNTED

6 Restore the OCR

-- First list the backups

[root@db1 grid]# ocrconfig -showbackup
PROT-26: Oracle Cluster Registry backup locations were retrieved from a local copy
db2 2020/03/04 12:49:04 /grid/11.2.0/grid/cdata/db-cluster/backup00.ocr
db2 2020/03/03 08:08:58 /grid/11.2.0/grid/cdata/db-cluster/backup01.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/backup02.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/day.ocr
db2 2020/03/03 04:08:56 /grid/11.2.0/grid/cdata/db-cluster/week.ocr

The manual backup taken a moment ago, /grid/11.2.0/grid/cdata/db-cluster/backup_20200304_193835.ocr, is no longer listed, so the most recent automatic backup, backup00.ocr, has to be used. Possibly this is because the OCR disk group was destroyed; a target directory should have been specified when the backup was taken. According to material consulted afterwards, automatic backup is not supported, and an export (ocrconfig -export) can be used to back up instead.

-- Restore

[root@db1 grid]# ocrconfig -restore backup00.ocr
PROT-18: Failed to open the specified backup file 'backup00.ocr'

This might be a permissions problem:

[root@db2 grid]# cd /grid/11.2.0/grid/cdata/db-cluster
[root@db2 db-cluster]# ll
total 60428
-rw------- 1 root root 6541312 Mar 4 12:49 backup00.ocr
-rw------- 1 root root 6541312 Mar 3 08:08 backup01.ocr
-rw------- 1 root root 6541312 Mar 3 04:08 backup02.ocr
-rw------- 1 root root 7430144 Feb 28 23:37 backup_20200228_233707.ocr
-rw------- 1 root root 7573504 Feb 29 15:10 backup_20200229_151048.ocr
-rw------- 1 root root 7626752 Mar 2 07:15 backup_20200302_071510.ocr
-rw------- 1 root root 6541312 Mar 4 12:49 day_.ocr
-rw------- 1 root root 6541312 Mar 3 04:08 day.ocr
-rw------- 1 root root 6541312 Mar 3 04:08 week.ocr

[root@db2 db-cluster]# chmod 777 backup00.ocr
[root@db2 db-cluster]# chown grid:oinstall backup00.ocr

[root@db1 grid]# ocrconfig -restore backup00.ocr
PROT-18: Failed to open the specified backup file 'backup00.ocr'

Still failing. A web search suggested that a parameter needs to be set.

7 Set the parameter

SQL> show parameter asm
NAME TYPE VALUE
------------------------------------ ----------- ------------------------------
asm_diskgroups string DATA, ARCH, OCR
asm_diskstring string
asm_power_limit integer 1
asm_preferred_read_failure_groups string

SQL> alter system set asm_diskstring='/dev/asm-*';
System altered.

Restore again; same error:

[root@db1 grid]# ocrconfig -restore backup00.ocr
PROT-18: Failed to open the specified backup file 'backup00.ocr'

[root@db1 grid]# crsctl check crs
CRS-4638: Oracle High Availability Services is online
CRS-4535: Cannot communicate with Cluster Ready Services
CRS-4529: Cluster Synchronization Services is online
CRS-4534: Cannot communicate with Event Manager

Stop CRS and restart it in exclusive mode:

[root@db1 grid]# crsctl stop crs -f
[root@db1 grid]# crsctl start crs -excl -nocrs

Restore:

[root@db1 grid]# ocrconfig -restore /grid/11.2.0/grid/cdata/db-cluster/backup00.ocr

After all that trial and error, it turns out the backup file simply has to be given with its full path.
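The lesson from the failed attempts above is that `ocrconfig -restore` was only accepted once the backup was named with its full path. A small guard like the following could catch that before the restore is attempted. It is a sketch under assumptions: `check_backup_path` is a hypothetical helper, and the readability test reflects the permissions of whichever user runs it.

```shell
#!/bin/sh
# Refuse to proceed unless the backup path is absolute and readable.
# usage: check_backup_path /full/path/to/backup.ocr
check_backup_path() {
    case $1 in
        /*) ;;                                   # absolute path: fine
        *)  echo "not an absolute path: $1" >&2
            return 1 ;;
    esac
    if [ ! -r "$1" ]; then
        echo "cannot read: $1" >&2
        return 1
    fi
    return 0
}

# Hypothetical use, run as root on the node that holds the backup file:
#   f=/grid/11.2.0/grid/cdata/db-cluster/backup00.ocr
#   check_backup_path "$f" && ocrconfig -restore "$f"
```

With the bare file name `backup00.ocr` used in the failed attempts, the helper stops at the first check and prints the reason.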

[root@db1 grid]# ocrcheck
Status of Oracle Cluster Registry is as follows :
Version : 3
Total space (kbytes) : 262120
Used space (kbytes) : 3220
Available space (kbytes) : 258900
ID : 1307910303
Device/File Name : +ocr
Device/File integrity check succeeded
Device/File not configured
Device/File not configured
Device/File not configured
Device/File not configured
Cluster registry integrity check succeeded
Logical corruption check succeeded

8 Restore the voting disks

[root@db1 grid]# crsctl replace votedisk +OCR
Successful addition of voting disk 7826ce42a03a4f8ebf19302a0d803826.
Successful addition of voting disk 0cff00884f1b4f79bf11b9b24e632a31.
Successful addition of voting disk 956b72351cc24f73bfec7ea1d78ce2c8.
Successfully replaced voting disk group with +OCR.
CRS-4266: Voting file(s) successfully replaced

-- Verify

[root@db1 grid]# crsctl check crs
CRS-4638: Oracle High Availability Services is online
CRS-4535: Cannot communicate with Cluster Ready Services
CRS-4529: Cluster Synchronization Services is online
CRS-4534: Cannot communicate with Event Manager

-- Check the voting files in the OCR disk group

[root@db1 grid]# crsctl query css votedisk
## STATE File Universal Id File Name Disk group
-- ----- ----------------- --------- ---------
1. ONLINE 7826ce42a03a4f8ebf19302a0d803826 (/dev/asm-diskb) [OCR]
2. ONLINE 0cff00884f1b4f79bf11b9b24e632a31 (/dev/asm-diskc) [OCR]
3. ONLINE 956b72351cc24f73bfec7ea1d78ce2c8 (/dev/asm-diskd) [OCR]
Located 3 voting disk(s).
9 Because the stack was started in exclusive mode above, stop CRS and start it again

[root@db1 grid]# crsctl stop crs
CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'db1'
CRS-2673: Attempting to stop 'ora.mdnsd' on 'db1'
CRS-2673: Attempting to stop 'ora.drivers.acfs' on 'db1'
CRS-2677: Stop of 'ora.drivers.acfs' on 'db1' succeeded
CRS-2677: Stop of 'ora.mdnsd' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.cssd' on 'db1'
CRS-2677: Stop of 'ora.cssd' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.gipcd' on 'db1'
CRS-2677: Stop of 'ora.gipcd' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.gpnpd' on 'db1'
CRS-2677: Stop of 'ora.gpnpd' on 'db1' succeeded
CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'db1' has completed
CRS-4133: Oracle High Availability Services has been stopped.

[root@db1 grid]# crsctl start crs
CRS-4123: Oracle High Availability Services has been started.

[root@db1 grid]# crsctl stop has -f
CRS-2791: Starting shutdown of Oracle High Availability Services-managed resources on 'db1'
CRS-2673: Attempting to stop 'ora.mdnsd' on 'db1'
CRS-2673: Attempting to stop 'ora.cssd' on 'db1'
CRS-2677: Stop of 'ora.cssd' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.crf' on 'db1'
CRS-2677: Stop of 'ora.mdnsd' on 'db1' succeeded
CRS-2677: Stop of 'ora.crf' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.gipcd' on 'db1'
CRS-2677: Stop of 'ora.gipcd' on 'db1' succeeded
CRS-2673: Attempting to stop 'ora.gpnpd' on 'db1'
CRS-2677: Stop of 'ora.gpnpd' on 'db1' succeeded
CRS-2793: Shutdown of Oracle High Availability Services-managed resources on 'db1' has completed
CRS-4133: Oracle High Availability Services has been stopped.

[root@db1 grid]# crsctl start crs
CRS-4123: Oracle High Availability Services has been started.
10 Verify

[root@db1 grid]# crsctl stat res -t
--------------------------------------------------------------------------------
NAME TARGET STATE SERVER STATE_DETAILS
--------------------------------------------------------------------------------
Local Resources
--------------------------------------------------------------------------------
ora.ARCH.dg
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.DATA.dg
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.LISTENER.lsnr
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.OCR.dg
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.asm
ONLINE ONLINE db1 Started
ONLINE ONLINE db2 Started
ora.gsd
OFFLINE OFFLINE db1
OFFLINE OFFLINE db2
ora.net1.network
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.ons
ONLINE ONLINE db1
ONLINE ONLINE db2
ora.registry.acfs
ONLINE ONLINE db1
ONLINE ONLINE db2
--------------------------------------------------------------------------------
Cluster Resources
--------------------------------------------------------------------------------
ora.LISTENER_SCAN1.lsnr
1 ONLINE ONLINE db2
ora.cvu
1 ONLINE ONLINE db2
ora.db1.vip
1 ONLINE ONLINE db1
ora.db2.vip
1 ONLINE ONLINE db2
ora.oc4j
1 ONLINE ONLINE db2
ora.orcl.db
1 ONLINE ONLINE db1 Open
2 ONLINE ONLINE db2 Open
ora.scan1.vip
1 ONLINE ONLINE db2

Node 2: verify

[root@db2 grid]# crsctl stat res -t

Done.

Summary:

1 Back up
ocrconfig -export /grid/ocr.exp

2 Corrupt the disks

3 Stop the cluster
crsctl stop cluster -all -f
crsctl stop crs -f

4 Start CRS in exclusive mode
crsctl start crs -excl -nocrs

5 Create the OCR disk group and mount the disk groups
SQL> create diskgroup ocr normal redundancy disk '/dev/asm-diskb','/dev/asm-diskc','/dev/asm-diskd' attribute 'au_size'='1M','compatible.asm' = '11.2.0','compatible.rdbms' = '11.2.0';
SQL> alter diskgroup data mount force;

6 Set the parameter
SQL> show parameter asm
SQL> alter system set asm_diskstring='/dev/asm-*';

7 Restore the OCR
[root@db1 grid]# ocrconfig -restore /grid/backup_20200305_120240.ocr

8 Restore the voting disks
[root@db1 grid]# crsctl replace votedisk +OCR

9 Stop the cluster (it was started in exclusive mode above)
[root@db1 grid]# crsctl stop crs -f

10 Start CRS
[root@db1 grid]# crsctl start crs

11 Verify
[root@db1 grid]# crsctl stat res -t

Reference case: http://blog.itpub.net/25462274/viewspace-2148847/

Voting Disk: this file mainly records node membership status. When a split-brain occurs, it decides which partition gets control; the other partitions must be evicted from the cluster. Voting disks use a majority algorithm: when several voting disks are configured, more than half of them must be usable at the same time for Clusterware to work normally. For example, with 3 voting disks configured, the cluster keeps working if one of them fails; if 2 fail, the majority requirement is no longer met and the cluster goes down immediately.
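The majority rule described above comes down to a single integer comparison. The sketch below only illustrates that arithmetic; `votedisk_majority_ok` is a hypothetical helper and does not query the cluster.

```shell
#!/bin/sh
# Succeed if a strict majority of the voting files is still usable.
# usage: votedisk_majority_ok TOTAL FAILED
votedisk_majority_ok() {
    vd_total=$1
    vd_failed=$2
    vd_alive=$((vd_total - vd_failed))
    # "more than half" is written as alive * 2 > total to stay in integers
    [ $((vd_alive * 2)) -gt "$vd_total" ]
}

# Walk through the three-disk configuration used in this article.
for failed in 0 1 2 3; do
    if votedisk_majority_ok 3 "$failed"; then
        echo "3 voting files, $failed failed: majority kept"
    else
        echo "3 voting files, $failed failed: majority lost"
    fi
done
```

This matches the CRS-1705 message seen earlier ("Found 1 configured voting files but 2 voting files are required"): with 3 files configured, at least 2 must remain.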

III. Recovering the OCR without a backup

1 For safety, take a backup anyway

[root@db1 grid]# ocrconfig -export /grid/back.ocr

2 Corrupt the disks

[root@db1 grid]# crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 7826ce42a03a4f8ebf19302a0d803826 (/dev/asm-diskb) [OCR]

2. ONLINE 0cff00884f1b4f79bf11b9b24e632a31 (/dev/asm-diskc) [OCR]

3. ONLINE 956b72351cc24f73bfec7ea1d78ce2c8 (/dev/asm-diskd) [OCR]

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskb count=500 bs=10M

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskc count=500 bs=10M

[root@db1 grid]# dd if=/dev/zero of=/dev/asm-diskd count=500 bs=10M

[root@db1 grid]# crsctl query css votedisk

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. OFFLINE 7826ce42a03a4f8ebf19302a0d803826 (/dev/asm-diskb) [OCR]

2. PENDOFFL 0cff00884f1b4f79bf11b9b24e632a31 (/dev/asm-diskc) [OCR]

3. PENDOFFL 956b72351cc24f73bfec7ea1d78ce2c8 (/dev/asm-diskd) [OCR]

[root@db1 grid]# crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4535: Cannot communicate with Cluster Ready Services

CRS-4530: Communications failure contacting Cluster Synchronization Services daemon

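CRS-4534: Cannot communicate with Event Manager

Neither CRS nor the OCR disk group is healthy.

3 Stop CRS and start it in exclusive mode, skipping the OCR and the voting disks

[root@db1 grid]# crsctl stop crs -f

[root@db1 grid]# crsctl start crs -excl -nocrs

4 Check the disk groups

-- Node 1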

SQL> select group_number,name,state,type from v$asm_diskgroup;

GROUP_NUMBER NAME STATE TYPE

------------ ------------------------------ ----------- ------

0 DATA DISMOUNTED

0 ARCH DISMOUNTED

Create the disk group:

SQL> create diskgroup ocr normal redundancy disk '/dev/asm-diskb','/dev/asm-diskc','/dev/asm-diskd' attribute 'au_size'='1M','compatible.asm' = '11.2.0','compatible.rdbms' = '11.2.0';

Mount and verify:

SQL> alter diskgroup DATA mount;

Diskgroup altered.

SQL> alter diskgroup ARCH mount;

Diskgroup altered.

SQL> select group_number,name,state,type from v$asm_diskgroup;

GROUP_NUMBER NAME STATE TYPE

------------ ------------------------------ ----------- ------

2 DATA MOUNTED EXTERN

3 ARCH MOUNTED EXTERN

1 OCR MOUNTED NORMAL

-- Node 2

[root@db2 grid]# crsctl stop has -f

[root@db2 grid]# crsctl start crs -excl -nocrs

SQL> select group_number,name,state,type from v$asm_diskgroup;

GROUP_NUMBER NAME STATE TYPE

------------ ------------------------------ ----------- ------

1 OCR MOUNTED NORMAL

0 DATA DISMOUNTED

0 ARCH DISMOUNTED

SQL> alter diskgroup DATA mount;

Diskgroup altered.

SQL> alter diskgroup arch mount;

Diskgroup altered.

SQL> select group_number,name,state,type from v$asm_diskgroup;

GROUP_NUMBER NAME STATE TYPE

------------ ------------------------------ ----------- ------

1 OCR MOUNTED NORMAL

2 DATA MOUNTED EXTERN

3 ARCH MOUNTED EXTERN

5 Stop CRS on both nodes

[root@db1 grid]# crsctl stop crs -f

[root@db2 grid]# crsctl stop crs -f

6 Remove the cluster configuration

-- Node 1

[root@db1 grid]# cd /grid/11.2.0/grid/crs/install/

[root@db1 install]# ./rootcrs.pl -deconfig -force

Using configuration parameter file: ./crsconfig_params

PRCR-1119 : Failed to look up CRS resources of ora.cluster_vip_net1.type type

PRCR-1068 : Failed to query resources

Cannot communicate with crsd

PRCR-1070 : Failed to check if resource ora.gsd is registered

Cannot communicate with crsd

PRCR-1070 : Failed to check if resource ora.ons is registered

Cannot communicate with crsd

CRS-4535: Cannot communicate with Cluster Ready Services

CRS-4000: Command Stop failed, or completed with errors.

CRS-4544: Unable to connect to OHAS

CRS-4000: Command Stop failed, or completed with errors.

Removing Trace File Analyzer

Successfully deconfigured Oracle clusterware stack on this node

-- Node 2

[root@db2 grid]# cd /grid/11.2.0/grid/crs/install/

[root@db2 install]# ./rootcrs.pl -deconfig -force -lastnode    -- wait a while; this step is slow

Successfully deconfigured Oracle clusterware stack on this node

The d.bin processes can be watched while this runs; they are killed off one by one:

[grid@db2 ~]$ ps -ef|grep d.bin

root 23797 1 0 20:08 ? 00:00:04 /grid/11.2.0/grid/bin/ohasd.bin exclusive

grid 23917 1 0 20:08 ? 00:00:03 /grid/11.2.0/grid/bin/oraagent.bin

grid 23939 1 0 20:08 ? 00:00:00 /grid/11.2.0/grid/bin/gpnpd.bin

grid 23951 1 0 20:08 ? 00:00:02 /grid/11.2.0/grid/bin/gipcd.bin

root 26225 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/cssdmonitor

root 26239 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/cssdagent

grid 26261 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/ocssd.bin -X

root 26295 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/orarootagent.bin

root 26308 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/octssd.bin

root 26839 23348 0 20:21 pts/0 00:00:00 /grid/11.2.0/grid/bin/crsctl.bin stop crs -f

grid 26850 24596 0 20:22 pts/3 00:00:00 grep d.bin

[grid@db2 ~]$ ps -ef|grep d.bin

grid 13066 24596 0 20:23 pts/3 00:00:00 grep d.bin
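The manual check above can be turned into a count that a script can wait on. This is a rough sketch with assumptions: `count_dbin_procs` is a hypothetical helper, and unlike the interactive `grep d.bin` (where the dot matches any character, so every path containing `grid/bin` is listed) it escapes the dot and therefore counts only names that literally end in `d.bin`, such as ohasd.bin or ocssd.bin.

```shell
#!/bin/sh
# Count clusterware daemon lines in a `ps -ef` listing read on stdin.
# Lines belonging to the grep command itself are ignored.
count_dbin_procs() {
    awk '/d\.bin/ && !/grep/ { n++ } END { print n + 0 }'
}

# Example using a few lines from the first listing above:
count_dbin_procs <<'EOF'
root 23797 1 0 20:08 ? 00:00:04 /grid/11.2.0/grid/bin/ohasd.bin exclusive
grid 26261 1 0 20:20 ? 00:00:00 /grid/11.2.0/grid/bin/ocssd.bin -X
grid 26850 24596 0 20:22 pts/3 00:00:00 grep d.bin
EOF
```

On a node it would be used as `ps -ef | count_dbin_procs`; a result of 0 corresponds to the second listing above, where only the grep line remains.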

7 Run the root.sh script to re-create the cluster

-- Node 1

[root@db1 install]# cd /grid/11.2.0/grid/

[root@db1 grid]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /grid/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /grid/11.2.0/grid/crs/install/crsconfig_params

User ignored Prerequisites during installation

Installing Trace File Analyzer

OLR initialization - successful

Adding Clusterware entries to upstart

CRS-2672: Attempting to start 'ora.mdnsd' on 'db1'

CRS-2676: Start of 'ora.mdnsd' on 'db1' succeeded

CRS-2672: Attempting to start 'ora.gpnpd' on 'db1'

CRS-2676: Start of 'ora.gpnpd' on 'db1' succeeded

CRS-2672: Attempting to start 'ora.cssdmonitor' on 'db1'

CRS-2672: Attempting to start 'ora.gipcd' on 'db1'

CRS-2676: Start of 'ora.cssdmonitor' on 'db1' succeeded

CRS-2676: Start of 'ora.gipcd' on 'db1' succeeded

CRS-2672: Attempting to start 'ora.cssd' on 'db1'

CRS-2672: Attempting to start 'ora.diskmon' on 'db1'

CRS-2676: Start of 'ora.diskmon' on 'db1' succeeded

CRS-2676: Start of 'ora.cssd' on 'db1' succeeded

ASM created and started successfully.

Disk Group ocr created successfully.

clscfg: -install mode specified

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Successful addition of voting disk 79d9cdaeb20c4fe4bf71b30812786545.

Successful addition of voting disk 3951a19969fd4f46bf31f280afb3d27b.

Successful addition of voting disk 07701a5c6d284f95bfb101cb0887212c.

Successfully replaced voting disk group with +ocr.

CRS-4266: Voting file(s) successfully replaced

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 79d9cdaeb20c4fe4bf71b30812786545 (/dev/asm-diskb) [OCR]

2. ONLINE 3951a19969fd4f46bf31f280afb3d27b (/dev/asm-diskc) [OCR]

3. ONLINE 07701a5c6d284f95bfb101cb0887212c (/dev/asm-diskd) [OCR]

Located 3 voting disk(s).

clscfg: EXISTING configuration version 5 detected.

clscfg: version 5 is 11g Release 2.

Successfully accumulated necessary OCR keys.

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Unable to get VIP info for new node at /grid/11.2.0/grid/crs/install/crsconfig_lib.pm line 9008.

/grid/11.2.0/grid/perl/bin/perl -I/grid/11.2.0/grid/perl/lib -I/grid/11.2.0/grid/crs/install /grid/11.2.0/grid/crs/install/rootcrs.pl execution failed

-- No listener resource exists.

-- Node 2

[root@db2 install]# cd /grid/11.2.0/grid/

[root@db2 grid]# ./root.sh

Performing root user operation for Oracle 11g

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /grid/11.2.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

The contents of "dbhome" have not changed. No need to overwrite.

The contents of "oraenv" have not changed. No need to overwrite.

The contents of "coraenv" have not changed. No need to overwrite.

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Using configuration parameter file: /grid/11.2.0/grid/crs/install/crsconfig_params

User ignored Prerequisites during installation

Installing Trace File Analyzer

OLR initialization - successful

Adding Clusterware entries to upstart

CRS-4402: The CSS daemon was started in exclusive mode but found an active CSS daemon on node db1, number 1, and is terminating

An active cluster was found during exclusive startup, restarting to join the cluster

Preparing packages for installation...

cvuqdisk-1.0.9-1

Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Verify:

[root@db2 grid]# crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online
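The check above can be scripted instead of being read by eye. The following is a minimal sketch, assuming the four message codes shown in the output above are the ones that indicate a healthy stack; `crs_all_online` is a hypothetical helper name, not an Oracle tool.

```shell
#!/bin/sh
# Hypothetical helper: read `crsctl check crs` output on stdin and succeed
# only if all four "is online" messages seen in this walkthrough are present.
crs_all_online() {
    out=$(cat)
    for code in CRS-4638 CRS-4537 CRS-4529 CRS-4533; do
        # Each message line starts with its code followed by a colon.
        printf '%s\n' "$out" | grep -q "^$code:" || return 1
    done
    return 0
}

# Usage (as root on the node being checked):
#   crsctl check crs | crs_all_online && echo "clusterware stack is up"
```

A failed start, such as the earlier output containing CRS-4535 and CRS-4534, makes the function return non-zero because CRS-4537 and CRS-4533 are missing.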

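8 Because the OCR was restored with CRS running in exclusive mode, stop CRS on node 1 and start it again normally

[root@db1 grid]# crsctl stop crs -f

[root@db1 grid]# crsctl start crs -- wait a while, startup takes time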

[root@db1 grid]# crsctl check crs

CRS-4638: Oracle High Availability Services is online

CRS-4537: Cluster Ready Services is online

CRS-4529: Cluster Synchronization Services is online

CRS-4533: Event Manager is online
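Since the stack needs some time to come up after `crsctl start crs`, the waiting can be automated with a small polling loop. This is only a sketch: `wait_until` is a hypothetical helper, and the attempt count and delay in the usage line are arbitrary values, not recommendations.

```shell
#!/bin/sh
# Hypothetical helper: run a command repeatedly until it succeeds or the
# attempt budget is used up.
#   $1 = maximum number of attempts, $2 = seconds between attempts,
#   remaining arguments = the command to run.
wait_until() {
    attempts=$1
    delay=$2
    shift 2
    i=0
    while [ "$i" -lt "$attempts" ]; do
        if "$@" >/dev/null 2>&1; then
            return 0
        fi
        i=$((i + 1))
        sleep "$delay"
    done
    return 1
}

# Usage (as root): poll every 10 seconds, up to 30 times, until the
# "Cluster Ready Services is online" message (CRS-4537) appears.
#   wait_until 30 10 sh -c 'crsctl check crs | grep -q "^CRS-4537:"'
```

Polling for the message text rather than the exit status avoids relying on how `crsctl check crs` sets its return code.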

[root@db1 grid]# crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.db2.vip

1 ONLINE ONLINE db2

9 Add the cluster resources

-- Local listener (as grid). First check the listener resource:

[grid@db1 ~]$ srvctl config listener

PRCN-2044 : No listener exists

Add the listener and start it:

[grid@db1 ~]$ srvctl add listener -l listener

[grid@db1 ~]$ crsctl start resource ora.LISTENER.lsnr

CRS-2672: Attempting to start 'ora.LISTENER.lsnr' on 'db2'

CRS-5016: Process "/grid/11.2.0/grid/bin/lsnrctl" spawned by agent "/grid/11.2.0/grid/bin/oraagent.bin" for action "start" failed: details at "(:CLSN00010:)" in "/grid/11.2.0/grid/log/db2/agent/crsd/oraagent_grid/oraagent_grid.log"

CRS-2676: Start of 'ora.LISTENER.lsnr' on 'db2' succeeded

[grid@db1 ~]$ srvctl config listener

Name: LISTENER

Network: 1,

Owner: grid -- the owner must be grid, otherwise adding the database later fails

Home: <CRS home>

End points: TCP:1521

[grid@db1 ~]$ crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE OFFLINE db1

ONLINE ONLINE db2

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.db2.vip

1 ONLINE ONLINE db2
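In the listing above the listener on db1 has TARGET ONLINE but STATE OFFLINE, which is easy to miss in a long table. A small filter can pull such rows out. The sketch below assumes the `crsctl stat res -t` layout shown in this article (a resource name line starting with `ora.`, followed by one row per server, with a leading cardinality number for cluster resources); `find_down_resources` is a hypothetical name.

```shell
#!/bin/sh
# Hypothetical helper: read `crsctl stat res -t` output on stdin and print
# "<resource> <server>" for every row whose TARGET is ONLINE but whose
# STATE is OFFLINE. Rows with TARGET OFFLINE (for example ora.gsd) are ignored.
find_down_resources() {
    awk '
        /^ora\./ { name = $1; next }   # remember the current resource name
        NF >= 2 {
            # Cluster resource rows start with a cardinality number.
            off = ($1 ~ /^[0-9]+$/) ? 1 : 0
            if ($(1 + off) == "ONLINE" && $(2 + off) == "OFFLINE")
                print name, $(3 + off)
        }
    '
}

# Usage:
#   crsctl stat res -t | find_down_resources
```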

Node 2 needs the listener added as well:

[grid@db2 ~]$ srvctl add listener -l listener

-- Add the SCAN IP. First check the SCAN configuration:

[grid@db1 ~]$ srvctl config scan

PRCS-1025 : Could not find Single Client Access Name Virtual Internet Protocol(VIP) resources TYPE=ora.scan_vip.type

Add the SCAN and start it:

[grid@db1 ~]$ srvctl add scan -n scanip

PRCN-2018 : Current user grid is not a privileged user

[grid@db1 ~]$ su root

Password:

[root@db1 grid]# srvctl add scan -n scanip

[root@db1 grid]# srvctl start scan

Verify:

[root@db1 grid]# srvctl config scan

SCAN name: scanip, Network: 1/192.168.56.0/255.255.255.0/eth0

SCAN VIP name: scan1, IP: /scanip/192.168.56.200

[root@db1 grid]# crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE OFFLINE db1

ONLINE ONLINE db2

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.db2.vip

1 ONLINE ONLINE db2

ora.scan1.vip

1 ONLINE ONLINE db1

Node 1 turns out to have no VIP, so add one for node 1:

[root@db1 grid]# srvctl add vip -n db1 -A db1_vip/255.255.255.0/eth0 -k 1

[root@db1 grid]# srvctl start vip -n db1

[root@db1 grid]# crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.db1.vip

1 ONLINE ONLINE db1

ora.db2.vip

1 ONLINE ONLINE db2

ora.scan1.vip

1 ONLINE ONLINE db1
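The missing VIP on node 1 was spotted by reading the resource table. As a rough sketch, the same check can be done per node by looking for an `ora.<node>.vip` resource name in the output; `missing_vips` is a hypothetical helper, and the naming pattern is taken from the listings in this article.

```shell
#!/bin/sh
# Hypothetical helper: read `crsctl stat res -t` output on stdin and print
# every node name given as an argument that has no ora.<node>.vip resource.
missing_vips() {
    out=$(cat)
    for node in "$@"; do
        printf '%s\n' "$out" | grep -q "^ora\.$node\.vip" || echo "$node"
    done
}

# Usage:
#   crsctl stat res -t | missing_vips db1 db2
```

An empty result means every listed node has a VIP resource registered; it says nothing about whether that VIP is online.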

-- Add the database

[root@db1 ~]# su - oracle

[oracle@db1 ~]$ echo $ORACLE_HOME

/oracle/dbhome_1

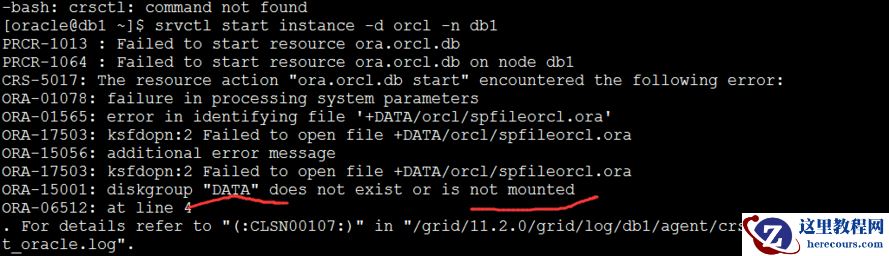

[oracle@db1 ~]$ srvctl add database -d orcl -o /oracle/dbhome_1 -p +DATA/orcl/spfileorcl.ora -c RAC

Try to start it. srvctl reports that the database has no instances, so the instances must be added:

[oracle@db1 ~]$ srvctl start database -d orcl

PRKO-3119 : Database orcl cannot be started since it has no configured instances.

[oracle@db1 ~]$ srvctl add instance -d orcl -i orcl1 -n db1

[oracle@db1 ~]$ srvctl add instance -d orcl -i orcl2 -n db2

Start the instance:

[oracle@db1 ~]$ srvctl start instance -d orcl -n db1

The start fails because the DATA disk group is not mounted. Check in ASM:

SQL> select group_number,name,state,type from v$asm_diskgroup;

GROUP_NUMBER NAME STATE TYPE

------------ ------------------------------ ----------- ------

1 OCR MOUNTED NORMAL

0 DATA DISMOUNTED

0 ARCH DISMOUNTED

SQL> alter diskgroup data mount;

SQL> alter diskgroup arch mount;

Node 2 needs the same mounts, otherwise starting its instance still fails:

SQL> alter diskgroup data mount;

SQL> alter diskgroup arch mount;
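Because the same mounts have to be repeated on every node, the statements can be generated from the query output rather than typed by hand. This is a sketch under one assumption: in the `v$asm_diskgroup` listing, a dismounted group has an empty TYPE column, so DISMOUNTED is the last field on its row, as in the output above. `mount_statements` is a hypothetical helper.

```shell
#!/bin/sh
# Hypothetical helper: read the v$asm_diskgroup listing
# (GROUP_NUMBER NAME STATE TYPE) on stdin and print one
# "alter diskgroup ... mount;" statement per DISMOUNTED group.
mount_statements() {
    awk 'NF >= 2 && $NF == "DISMOUNTED" {
        # The group name is the field just before the state.
        printf "alter diskgroup %s mount;\n", $(NF - 1)
    }'
}

# Usage: save the query output to a file, review the generated statements,
# then run them in sqlplus as sysasm on each node.
#   mount_statements < diskgroups.txt
```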

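Alternatively, set the asm_diskgroups parameter right after re-creating the OCR disk group. The disk groups are then no longer DISMOUNTED after the cluster is restarted.

SQL> show parameter asm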

NAME TYPE VALUE

------------------------------------ ----------- ------------------------------

asm_diskgroups string DATA, ARCH

asm_diskstring string /dev/asm-*

asm_power_limit integer 1

asm_preferred_read_failure_groups string

SQL> alter system set asm_diskgroups='DATA','ARCH','OCR';

[oracle@db1 ~]$ srvctl start instance -d orcl -n db1

[oracle@db1 ~]$ srvctl start instance -d orcl -n db2

[grid@db1 grid]$ crsctl stat res -t

--------------------------------------------------------------------------------

NAME TARGET STATE SERVER STATE_DETAILS

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.gsd

OFFLINE OFFLINE db1

OFFLINE OFFLINE db2

ora.net1.network

ONLINE ONLINE db1

ONLINE ONLINE db2

ora.ons

ONLINE ONLINE db1

ONLINE ONLINE db2

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.db1.vip

1 ONLINE ONLINE db1

ora.db2.vip

1 ONLINE ONLINE db2

ora.orcl.db

1 ONLINE ONLINE db1 Open

2 ONLINE ONLINE db2 Open

ora.scan1.vip

1 ONLINE ONLINE db2

Done.
