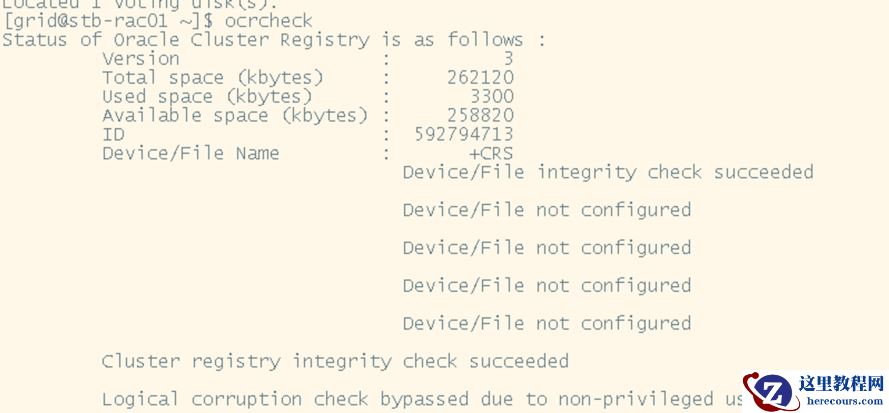

After a reboot, the operating system on node 2 was found to be corrupted, so the node has to be rebuilt. The procedure is described below.

I. Remove node 2's information from the cluster

1. Delete the instance, either with the dbca GUI or in silent mode:

    [oracle@rac1 ~]$ dbca -silent -deleteinstance -nodelist rac2 -gdbname orcl -instancename orcl2 -sysdbausername sys -sysdbapassword oracle

In the GUI the path is: dbca > Instance Management > Delete an instance > select the service and enter the password > select the inactive instance > confirm the deletion.

2. Check the database instances:

    [oracle@rac1 ~]$ srvctl config database -d orcl
    Database unique name: orcl
    Database name: orcl
    Oracle home: /oracle/app/product/11.2.0/db_1
    Oracle user: oracle
    Spfile: +DATA/orcl/spfileorcl.ora
    Domain:
    Start options: open
    Stop options: immediate
    Database role: PRIMARY
    Management policy: AUTOMATIC
    Server pools: orcl
    Database instances: orcl1
    Disk Groups: DATA
    Services:
    Database is administrator managed

    sqlplus / as sysdba
    SQL> select inst_id,instance_name from gv$instance;
    INST_ID INSTANCE_NAME
          1 orcl1

3. On the surviving node, update the cluster node list as the oracle user:

    [oracle@rac1 ~]$ $ORACLE_HOME/oui/bin/runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={rac1}"
    Starting Oracle Universal Installer...
    Checking swap space: must be greater than 500 MB. Actual 8191 MB Passed
    The inventory pointer is located at /etc/oraInst.loc
    The inventory is located at /oracle/oraInventory
    'UpdateNodeList' was successful.

4. Remove node 2's VIP from the cluster

Stop the node 2 VIP:

    cd $GRID_HOME/bin
    [root@rac1 bin]# ./srvctl stop vip -i rac2-vip

Remove the node 2 VIP:

    [root@rac1 bin]# ./srvctl remove vip -i rac2-vip -f

5. Check the node status

Check the cluster status:

    [grid@rac1 ~]$ crs_stat -t
    [grid@rac1 ~]$ crsctl stat res -t

The VIP entries for node 2 should no longer appear in the output.

Check the cluster node information:

    [grid@rac1 ~]$ olsnodes -s -t
    rac1 Active Unpinned
    rac2 Inactive Unpinned

(If node 2 is shown as Pinned, unpin it first: [grid@rac1 ~]$ crsctl unpin css -n rac2)

6. Delete the node:

    [root@rac1 bin]# $GRID_HOME/bin/crsctl delete node -n rac2
    CRS-4661: Node rac2 successfully deleted.

7. Update the GI inventory:

    su - grid
    cd $ORACLE_HOME/oui/bin
    [grid@rac1 bin]$ ./runInstaller -updateNodeList ORACLE_HOME=$ORACLE_HOME "CLUSTER_NODES={rac1}" CRS=TRUE -local
    Starting Oracle Universal Installer...
    Checking swap space: must be greater than 500 MB. Actual 8191 MB Passed
    The inventory pointer is located at /etc/oraInst.loc
    The inventory is located at /oracle/oraInventory
    'UpdateNodeList' was successful.

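
The check in step 5 can also be scripted. The following is a minimal sketch, not part of the original procedure: it assumes `olsnodes -s -t` prints one node per line as "name status pin-state", as in the output shown above. The helper names `node_state` and `safe_to_delete` are hypothetical and are not Oracle tools.

```shell
#!/bin/sh
# node_state NODE: read `olsnodes -s -t` output on stdin and print
# "<status> <pin-state>" for NODE, or "absent" if NODE is not listed.
# (Hypothetical helper; the column layout is assumed from the sample output.)
node_state() {
    awk -v node="$1" '
        $1 == node { print $2, $3; found = 1 }
        END        { if (!found) print "absent" }
    '
}

# safe_to_delete NODE: succeed only if NODE is listed as Inactive and Unpinned,
# the state shown in the walkthrough just before `crsctl delete node`.
safe_to_delete() {
    [ "$(node_state "$1")" = "Inactive Unpinned" ]
}
```

A possible use on the surviving node would be `olsnodes -s -t | safe_to_delete rac2 && echo "rac2 can be deleted"`. If the node is reported as Pinned, the function fails, which is the case where `crsctl unpin css -n rac2` is needed first.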
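II. Add node 2 back to the cluster

1. Prerequisites

(1) Create the required users and groups, with the same user and group IDs as on the existing node.
(2) Configure the hosts file so that it is identical on the new node and the existing node.
(3) Configure the system parameters and user limits to match the existing node, and configure the network.
(4) Create the required directories and make sure ownership and permissions match (adjust the paths to your environment; this step is very important):

    mkdir -p /oracle/app
    mkdir -p /oracle/grid/crs_1
    mkdir -p /oracle/gridbase
    mkdir -p /oracle/oraInventory
    chown oracle:oinstall /oracle/app
    chown grid:oinstall /oracle/grid/
    chown grid:oinstall /oracle/grid/crs_1
    chown grid:oinstall /oracle/gridbase
    chown grid:oinstall /oracle/oraInventory
    chmod 770 /oracle/oraInventory

(5) Check the multipath configuration and the disk permissions.

2. Configure SSH between the nodes and install the cluster rpm package

Go to the sshsetup directory under the unpacked grid software:

    [root@rac1 sshsetup]# cd /oracle/grid/grid/sshsetup

SSH for the grid user:

    ./sshUserSetup.sh -user grid -hosts "rac1 rac2" -advanced -noPromptPassphrase

SSH for the oracle user:

    ./sshUserSetup.sh -user oracle -hosts "rac1 rac2" -advanced -noPromptPassphrase

Copy the rpm package from the grid software directory to node 2:

    [grid@rac1 ~]$ scp cvuqdisk-1.0.9-1.rpm 192.168.40.102:/home/grid

Install the rpm package on node 2 (installing an rpm requires root privileges):

    [grid@rac2 ~]$ rpm -ivh cvuqdisk-1.0.9-1.rpm

If the oinstall group does not exist, the installation may fail. In that case set the owning group manually first: export CVUQDISK_GRP=dba

3. Check whether rac2 meets the RAC installation requirements

(1) Check the network and storage:

    [grid@racdb1 ~]$ cluvfy stage -post hwos -n rac2
    Check: TCP connectivity of subnet "10.0.0.0"
    Source                Destination      Connected?
    rac1:192.168.40.101   rac2:10.0.0.3    failed
    ERROR:
    PRVF-7617 : Node connectivity between "rac1 : 192.168.40.101" and "rac2 : 10.0.0.3" failed
    Result: TCP connectivity check failed for subnet "10.0.0.0"
    Result: Node connectivity check failed

If the error above appears, it can be ignored.

(2) Check rpm packages, disk space and so on:

    [grid@racdb1 ~]$ cluvfy comp peer -n rac2

(3) Run the overall check:

    [grid@racdb1 ~]$ cluvfy stage -pre nodeadd -n rac2 -fixup -verbose

4. Add the node

Run this step as the grid user from the grid_home/oui/bin directory. The pre-checks that addNode performs are skipped here, because this environment does not use DNS or NTP and the node cannot be added if those pre-checks fail. Before running the command, delete small log files, especially audit logs and trace logs; otherwise copying the home to the new node is very slow.

    export IGNORE_PREADDNODE_CHECKS=Y
    [grid@rac1 bin]$ /oracle/grid/crs_1/oui/bin/addNode.sh -silent "CLUSTER_NEW_NODES={rac2}" "CLUSTER_NEW_VIRTUAL_HOSTNAMES={rac2-vip}" "CLUSTER_NEW_PRIVATE_NODE_NAMES={rac2-priv}"
    Each script in the list below is followed by a list of nodes.
    /oracle/oraInventory/orainstRoot.sh #On nodes rac2
    /oracle/grid/crs_1/root.sh #On nodes rac2
    To execute the configuration scripts: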

Open a terminal window

Log in as "root"

Run the scripts in each cluster node

    The Cluster Node Addition of /oracle/grid/crs_1 was unsuccessful.
    Please check '/tmp/silentInstall.log' for more details.

Run the scripts listed in the prompt above on node 2:

(1) orainstRoot.sh:

    [root@rac2 oracle]# /oracle/oraInventory/orainstRoot.sh

(2) root.sh:

    [root@rac2 oracle]# /oracle/grid/crs_1/root.sh
    Performing root user operation for Oracle 11g
    The following environment variables are set as:
    ORACLE_OWNER= grid
    ORACLE_HOME= /oracle/grid/crs_1
    Enter the full pathname of the local bin directory: [/usr/local/bin]:
    Copying dbhome to /usr/local/bin ...
    Copying oraenv to /usr/local/bin ...
    Copying coraenv to /usr/local/bin ...
    Creating /etc/oratab file...
    Entries will be added to the /etc/oratab file as needed by
    Database Configuration Assistant when a database is created
    Finished running generic part of root script.
    Now product-specific root actions will be performed.
    Using configuration parameter file: /oracle/grid/crs_1/crs/install/crsconfig_params
    Creating trace directory
    User ignored Prerequisites during installation
    Installing Trace File Analyzer
    OLR initialization - successful
    Adding Clusterware entries to upstart
    CRS-4402: The CSS daemon was started in exclusive mode but found an active CSS daemon on node rac1, number 1, and is terminating
    An active cluster was found during exclusive startup, restarting to join the cluster
    clscfg: EXISTING configuration version 5 detected.
    clscfg: version 5 is 11g Release 2.
    Successfully accumulated necessary OCR keys.
    Creating OCR keys for user 'root', privgrp 'root'..
    Operation successful.
    PRKO-2190 : VIP exists for node rac2, VIP name rac2-vip
    PRKO-2420 : VIP is already started on node(s): rac2
    Configure Oracle Grid Infrastructure for a Cluster ... succeeded

5. Add the database software to the new node (run on node 1)

As the oracle user:

    cd $ORACLE_HOME/oui/bin
    [oracle@rac1 bin]$ ./addNode.sh -silent "CLUSTER_NEW_NODES={rac2}"

Then run the root script on node 2:

    [root@rac2 oracle]# /oracle/app/product/11.2.0/db_1/root.sh
    Performing root user operation for Oracle 11g
    The following environment variables are set as:
    ORACLE_OWNER= oracle
    ORACLE_HOME= /oracle/app/product/11.2.0/db_1
    Enter the full pathname of the local bin directory: [/usr/local/bin]:
    The contents of "dbhome" have not changed. No need to overwrite.
    The contents of "oraenv" have not changed. No need to overwrite.
    The contents of "coraenv" have not changed. No need to overwrite.
    Entries will be added to the /etc/oratab file as needed by
    Database Configuration Assistant when a database is created
    Finished running generic part of root script.
    Now product-specific root actions will be performed.
    Finished product-specific root actions.

6. Create the node 2 instance (run on node 1)

For a Data Guard database, db_unique_name must temporarily be set to the same value as db_name, otherwise dbca reports an error. Change db_unique_name back once the instance has been added.

    [oracle@rac1 bin]$ dbca -silent -addInstance -nodeList rac2 -gdbName orcl -instanceName orcl2 -sysDBAUserName sys -sysDBAPassword "oracle"
    Adding instance
    1% complete
    2% complete
    6% complete
    13% complete
    20% complete
    27% complete
    28% complete
    34% complete
    41% complete
    48% complete
    54% complete
    66% complete
    Completing instance management.
    76% complete
    100% complete
    Look at the log file "/oracle/app/cfgtoollogs/dbca/orcl/orcl.log" for further details.

7. Verify the cluster status

    [grid@rac2 ~]$ crs_stat -t
    Name           Type           Target    State     Host
    ora.DATA.dg    ora....up.type ONLINE    ONLINE    rac1
    ora....ER.lsnr ora....er.type ONLINE    ONLINE    rac1
    ora....N1.lsnr ora....er.type ONLINE    ONLINE    rac1
    ora.OCRVOT.dg  ora....up.type ONLINE    ONLINE    rac1
    ora.asm        ora.asm.type   ONLINE    ONLINE    rac1
    ora.cvu        ora.cvu.type   ONLINE    ONLINE    rac1
    ora.gsd        ora.gsd.type   OFFLINE   OFFLINE
    ora....network ora....rk.type ONLINE    ONLINE    rac1
    ora.oc4j       ora.oc4j.type  ONLINE    ONLINE    rac1
    ora.ons        ora.ons.type   ONLINE    ONLINE    rac1
    ora.orcl.db    ora....se.type ONLINE    ONLINE    rac1
    ora....SM1.asm application    ONLINE    ONLINE    rac1
    ora....C1.lsnr application    ONLINE    ONLINE    rac1
    ora.rac1.gsd   application    OFFLINE   OFFLINE
    ora.rac1.ons   application    ONLINE    ONLINE    rac1
    ora.rac1.vip   ora....t1.type ONLINE    ONLINE    rac1
    ora....SM2.asm application    ONLINE    ONLINE    rac2
    ora....C2.lsnr application    ONLINE    ONLINE    rac2
    ora.rac2.gsd   application    OFFLINE   OFFLINE
    ora.rac2.ons   application    ONLINE    ONLINE    rac2
    ora.rac2.vip   ora....t1.type ONLINE    ONLINE    rac2
    ora....ry.acfs ora....fs.type ONLINE    ONLINE    rac1
    ora.scan1.vip  ora....ip.type ONLINE    ONLINE    rac1

    [grid@rac2 ~]$ srvctl status db -d orcl
    Instance orcl1 is running on node rac1
    Instance orcl2 is running on node rac2

    SQL> select inst_id,status from gv$instance;
    INST_ID STATUS
          3 OPEN
          1 OPEN

    SQL> select open_mode from v$database;
    OPEN_MODE
    READ WRITE
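
The final verification can be automated in a similar way. The sketch below is an illustration only: it assumes the `srvctl status` output uses the "Instance NAME is running on node HOST" wording shown above, and `all_instances_running` is a hypothetical helper name rather than part of any Oracle tooling.

```shell
#!/bin/sh
# all_instances_running INSTANCE...: read `srvctl status database` output on
# stdin and succeed only if every named instance is reported as running.
# (Hypothetical helper; the message format is assumed from the sample output.)
all_instances_running() {
    status=$(cat)    # capture stdin once so it can be searched per instance
    for inst in "$@"; do
        printf '%s\n' "$status" | grep -q "^Instance $inst is running on node " || {
            echo "instance $inst is not running" >&2
            return 1
        }
    done
}
```

It could be called as `srvctl status database -d orcl | all_instances_running orcl1 orcl2`. A non-zero exit status then indicates that at least one of the listed instances did not come up after the node was re-added.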