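Terminology:

PV (Physical Volume): the lowest layer in logical volume management. A physical volume can be a partition on a physical disk or an entire physical disk.

VG (Volume Group): built on top of physical volumes. A volume group must contain at least one physical volume, and more physical volumes can be added dynamically after the group is created. An LVM system can have a single volume group or several.

LV (Logical Volume): built on top of a volume group. Unallocated space in the volume group can be used to create new logical volumes, and a logical volume can be extended or shrunk dynamically after creation. The logical volumes on a system can all belong to one volume group or be spread across several.

Method:

1. Creating and extending LVM

1.1) Create the PV

    # fdisk /dev/sdc
    # pvcreate /dev/sdc1
    Physical volume "/dev/sdc1" successfully created.

1.2.1) Create the VG

    # vgcreate vg2020 /dev/sdc1
    Volume group "vg2020" successfully created
    # vgdisplay

Check Free PE in the vgdisplay output:

    Free PE / Size 76799 / <300.00 GiB

1.2.2) Remove the VG

    # vgremove vg2020

1.3) Create the LV

    # lvcreate -L 299G -n LV20 vg2020
    Logical volume "LV20" created.

Or, with a lowercase -l (the size is given in extents or as a percentage):

    # lvcreate -l 100%VG -n LV20 vg2020
    # lvscan
    ACTIVE '/dev/vg2020/LV20' [299.00 GiB] inherit

1.4) Remove the LV

    # lvremove /dev/vg2020/LV20

1.5) Format the volume

    # mkfs.xfs /dev/vg2020/LV20

1.6) Add space (extend)

Take a backup first: unmounting and repartitioning carry a risk of data loss.

    # umount /data

If umount reports that the target is busy, kill the processes using it and retry:

    # fuser -mv -k /data
    # umount /data
    # fdisk /dev/sdd
    # pvcreate /dev/sdd1

Add /dev/sdd1 to the vg2020 volume group:

    # vgextend vg2020 /dev/sdd1

Check the sizes; lvscan or lvdisplay shows the LV path:

    # pvdisplay
    # lvscan
    ACTIVE '/dev/vg2020/LV20' [299.00 GiB] inherit
    # mount /dev/vg2020/LV20 /data

Extend the logical volume by 100G:

    # lvextend -L +100G /dev/vg2020/LV20

At this point the filesystem on /data still has its old size; it has to be grown separately. resize2fs handles ext2/3/4 only:

    # resize2fs /dev/vg2020/LV20

The volume above was formatted with mkfs.xfs, so grow it with xfs_growfs on the mount point instead:

    # xfs_growfs /data

Then add the mount to /etc/fstab:

    # vi /etc/fstab
    /dev/vg2020/LV20 /data xfs defaults 0 0

Example: extending the root filesystem

Step 1:

    # lsblk
    NAME                                    MAJ:MIN RM  SIZE RO TYPE  MOUNTPOINT
    sdd                                       8:48   0   20G  0 disk
    └─36000c296031e888f0c9e24bb1e795af3     252:3    0   20G  0 mpath
    sdb                                       8:16   0   18G  0 disk
    ├─sdb1                                    8:17   0   18G  0 part
    └─36000c29c2ef061b8f51bf86ee5438cf0     252:4    0   18G  0 mpath
      └─36000c29c2ef061b8f51bf86ee5438cf0p1 252:6    0   18G  0 part
    sr0                                      11:0    1 1024M  0 rom
    sdc                                       8:32   0   10G  0 disk
    ├─sdc1                                    8:33   0   10G  0 part
    └─36000c2928512a9d98974d590cf8709b5     252:2    0   10G  0 mpath
      └─36000c2928512a9d98974d590cf8709b5p1 252:5    0   10G  0 part
    sda                                       8:0    0   45G  0 disk
    ├─sda2                                    8:2    0 34.2G  0 part
    │ ├─ol-swap                             252:1    0    4G  0 lvm   [SWAP]
    │ └─ol-root                             252:0    0 30.2G  0 lvm   /
    ├─sda3                                    8:3    0   10G  0 part
    └─sda1                                    8:1    0  800M  0 part  /boot

Step 2:

    # fdisk /dev/sda

Here the sda disk itself had been enlarged at the hardware level; the added space is partitioned as /dev/sda3.

Step 3:

    # pvcreate /dev/sda3

Step 4:

    # lvscan
    ACTIVE '/dev/ol/swap' [4.00 GiB] inherit
    ACTIVE '/dev/ol/root' [30.21 GiB] inherit

Step 5:

    # vgscan
    Reading volume groups from cache.
    Found volume group "ol" using metadata type lvm2

Step 6:

    # vgextend ol /dev/sda3
    Volume group "ol" successfully extended

Step 7:

    # vgdisplay

The vgdisplay output shows the free space, which can then be added to the logical volume:

    # lvextend -L +9.8G /dev/ol/root
    Rounding size to boundary between physical extents: 9.80 GiB.
    Size of logical volume ol/root changed from 30.21 GiB (7735 extents) to <40.02 GiB (10244 extents).
    Logical volume ol/root successfully resized.

Or, to use all remaining free space (percentages require the lowercase -l):

    # lvextend -l +100%FREE /dev/ol/root

Step 8:

    # resize2fs /dev/ol/root
    resize2fs 1.42.9 (28-Dec-2013)
    resize2fs: Bad magic number in super-block while trying to open /dev/ol/root
    Couldn't find valid filesystem superblock.

resize2fs only handles ext2/3/4. The root filesystem here is XFS, so it fails as shown above; use xfs_growfs instead:

    [root@rac2 ~]# xfs_growfs /dev/ol/root
    meta-data=/dev/mapper/ol-root    isize=256    agcount=4, agsize=1980160 blks
             =                       sectsz=512   attr=2, projid32bit=1
             =                       crc=0        finobt=0 spinodes=0
    data     =                       bsize=4096   blocks=7920640, imaxpct=25
             =                       sunit=0      swidth=0 blks
    naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
    log      =internal               bsize=4096   blocks=3867, version=2
             =                       sectsz=512   sunit=0 blks, lazy-count=1
    realtime =none                   extsz=4096   blocks=0, rtextents=0
    data blocks changed from 7920640 to 10489856

    # df -h
    Filesystem           Size  Used Avail Use% Mounted on
    devtmpfs             2.4G     0  2.4G   0% /dev
    tmpfs                2.4G  131M  2.3G   6% /dev/shm
    tmpfs                2.4G  9.4M  2.4G   1% /run
    tmpfs                2.4G     0  2.4G   0% /sys/fs/cgroup
    /dev/mapper/ol-root   41G   28G   13G  68% /
    /dev/sda1            797M  217M  580M  28% /boot
    tmpfs                483M   12K  483M   1% /run/user/42
    tmpfs                483M     0  483M   0% /run/user/0

The extension succeeded.

After mounting a new volume, remember to add it to /etc/fstab so that it is mounted at boot:

    # df -h        (check that the space has increased)
    # reboot       (check that the volume is mounted automatically)

For the remaining commands, see the worked case below.

Approach:

1. Add space, convert the disk to LVM, then migrate the u01 directory onto it.
2. If spare space is available, use it to create the LVM partition, then migrate.

On site, /home had enough free space and /u01 was a separate mount, so the plan was to archive the u01 directory into /home and then rebuild u01 as an LVM volume.

Detailed steps:

1. Check for the pvcreate command

    [root@tsdb01 bin]# pvcreate
    -bash: pvcreate: command not found

The command is missing, so install it with yum (both nodes):

    # yum -y install lvm2

2. Stop the database, the cluster and the listener (both nodes)

3. Back up the u01 directory to /home/bak (both nodes)

    # mkdir -p /home/bak
    # cd /home/bak
    # tar -zcvf u01.tar.gz /u01

Check blkid.

Node 1

    [root@tsdb01 home]# blkid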
    /dev/vda2: UUID="e4bc0543-19de-4c04-8667-6f6a9e09f7c9" TYPE="xfs"
    /dev/vda1: UUID="28de0b80-fb53-4bd2-96e3-02d15135385f" TYPE="xfs"
    /dev/vda3: UUID="ea09e041-0a0a-4be1-a77b-aac6d04632cf" TYPE="swap"
    /dev/vda5: UUID="65972b46-175b-47b5-ad70-700b282a7781" TYPE="xfs"
    /dev/vdb1: UUID="748892f0-dcb0-4511-9b30-133d746dfde3" TYPE="xfs"
    /dev/sr0: UUID="2018-11-25-23-54-16-00" LABEL="CentOS 7 x86_64" TYPE="iso9660" PTTYPE="dos"
    /dev/sde1: TYPE="oracleasm"
    /dev/sdb1: TYPE="oracleasm"
    /dev/sdh1: TYPE="oracleasm"
    /dev/sdd1: TYPE="oracleasm"
    /dev/sda1: TYPE="oracleasm"
    /dev/sdg1: TYPE="oracleasm"
    /dev/sdc1: TYPE="oracleasm"
    /dev/sdf1: TYPE="oracleasm"

Node 2

    [root@tsdb02 home]# blkid
    /dev/vda2: UUID="9ac9aa76-6847-4e8c-81ff-53bf2c0bc367" TYPE="xfs"
    /dev/vda1: UUID="a212255f-464e-46b4-99dc-2ad8d5baffdf" TYPE="xfs"
    /dev/vda3: UUID="dd1566ef-1ea5-4d43-a525-ce79ffaef956" TYPE="swap"
    /dev/vda5: UUID="f1e4ce93-4d16-464c-86de-b391167f662b" TYPE="xfs"
    /dev/vdb1: UUID="a4be0692-e0b6-44e7-bb30-d7e4ee806ff0" TYPE="xfs"
    /dev/sr0: UUID="2018-11-25-23-54-16-00" LABEL="CentOS 7 x86_64" TYPE="iso9660" PTTYPE="dos"
    /dev/sdc1: TYPE="oracleasm"
    /dev/sdh1: TYPE="oracleasm"
    /dev/sde1: TYPE="oracleasm"
    /dev/sdb1: TYPE="oracleasm"
    /dev/sdg1: TYPE="oracleasm"
    /dev/sdd1: TYPE="oracleasm"
    /dev/sdf1: TYPE="oracleasm"
    /dev/sda1: TYPE="oracleasm"

4. Check the partition layout with fdisk -l

Node 1

    Disk /dev/vdb: 322.1 GB, 322122547200 bytes, 629145600 sectors
       Device Boot      Start         End      Blocks   Id  System
    /dev/vdb1            2048   629145599   314571776   83  Linux

Node 2

    Disk /dev/vdb: 322.1 GB, 322122547200 bytes, 629145600 sectors
       Device Boot      Start         End      Blocks   Id  System
    /dev/vdb1            2048   629145599   314571776   83  Linux

5. Repartition the disk

Node 1: unmount first

    # fuser -mv -k /u01
                         USER        PID ACCESS COMMAND
    /u01:                root     kernel mount /u01
                         grid      13435 F.c.. sleep
                         grid      13500 F.c.. sleep
                         root      30940 F..e. java
    [root@tsdb01 bak]# umount /u01
    [root@tsdb01 ~]# fdisk /dev/vdb
    Welcome to fdisk (util-linux 2.23.2).
    Changes will remain in memory only, until you decide to write them.
    Be careful before using the write command.
    Command (m for help): q

    [root@tsdb01 ~]# fdisk /dev/vdb
    Welcome to fdisk (util-linux 2.23.2).
    Changes will remain in memory only, until you decide to write them.
    Be careful before using the write command.
    Command (m for help): d
    Selected partition 1
    Partition 1 is deleted
    Command (m for help): n
    Partition type:
       p   primary (0 primary, 0 extended, 4 free)
       e   extended
    Select (default p): p
    Partition number (1-4, default 1):
    First sector (2048-629145599, default 2048):
    Using default value 2048
    Last sector, +sectors or +size{K,M,G} (2048-629145599, default 629145599):
    Using default value 629145599
    Partition 1 of type Linux and of size 300 GiB is set
    Command (m for help): w
    The partition table has been altered!
    Calling ioctl() to re-read partition table.
    WARNING: Re-reading the partition table failed with error 16: Device or resource busy.
    The kernel still uses the old table. The new table will be used at
    the next reboot or after you run partprobe(8) or kpartx(8)
    Syncing disks.

Node 2: unmount first

    # fuser -mv -k /u01
                         USER        PID ACCESS COMMAND
    /u01:                root     kernel mount /u01
                         grid      13435 F.c.. sleep
                         grid      13500 F.c.. sleep
                         root      30940 F..e. java
    [root@tsdb02 bak]# umount /u01
    # fdisk /dev/vdb

6. Create the LVM volumes and check them

Node 1: because /u01 had not actually been unmounted, pvcreate failed as follows

    [root@tsdb01 ~]# pvcreate /dev/vdb1
    Device vg2020 not found.
    Can't open /dev/vdb1 exclusively. Mounted filesystem?
    Can't open /dev/vdb1 exclusively. Mounted filesystem?
    [root@tsdb01 ~]# umount /u01
    umount: /u01: target is busy.
    (In some cases useful info about processes that use
    the device is found by lsof(8) or fuser(1))
    [root@tsdb01 /]# umount /u01
    umount: /u01: target is busy.
    (In some cases useful info about processes that use
    the device is found by lsof(8) or fuser(1))
    [root@tsdb01 /]# fuser -mv -k /u01
                         USER        PID ACCESS COMMAND
    /u01:                root     kernel mount /u01
                         ogg        6014 ....m mgr
                         grid      23000 F.c.. OSWatcher.sh
                         grid      24111 F.c.. OSWatcherFM.sh
                         root      31927 F..e. java
                         grid      36355 F.c.. sleep
                         grid      36513 F.c.. sleep

Unmount immediately afterwards, before the processes come back, then create the PV and VG:

    # umount /u01
    # pvcreate /dev/vdb1
    [root@tsdb01 u01]# vgcreate vg2020 /dev/vdb1
    WARNING: xfs signature detected on /dev/vdb1 at offset 0. Wipe it? [y/n]: y
    Wiping xfs signature on /dev/vdb1.
    Physical volume "/dev/vdb1" successfully created.
    Volume group "vg2020" successfully created

Node 2

    [root@tsdb02 bak]# pvcreate /dev/vdb1
    Device vg2020 not found.
    WARNING: xfs signature detected on /dev/vdb1 at offset 0. Wipe it? [y/n]: y
    Wiping xfs signature on /dev/vdb1.
    Physical volume "/dev/vdb1" successfully created.

Node 1: handling the following error. Running pvcreate again on a device that already belongs to a volume group is refused, and the last PV cannot be removed from its VG with vgreduce, so the VG has to be removed first. Only the VG name is needed; the extra /dev/vdb1 argument is treated as a second VG name, which causes the harmless "not found" messages.

    [root@tsdb01 u01]# pvcreate /dev/vdb1
    Can't initialize physical volume "/dev/vdb1" of volume group "vg2020" without -ff
    /dev/vdb1: physical volume not initialized.
    [root@tsdb01 u01]# vgreduce vg2020 /dev/vdb1
    Can't remove final physical volume "/dev/vdb1" from volume group "vg2020"
    [root@tsdb01 u01]# vg
    vgcfgbackup   vgck       vgdisplay  vgimport       vgmknodes  vgrename  vgsplit
    vgcfgrestore  vgconvert  vgexport   vgimportclone  vgreduce   vgs
    vgchange      vgcreate   vgextend   vgmerge        vgremove   vgscan
    [root@tsdb01 u01]# vgremove vg2020 /dev/vdb1
    Volume group "vg2020" successfully removed
    Volume group "vdb1" not found
    Cannot process volume group vdb1
    [root@tsdb01 u01]# pvcreate /dev/vdb1
    Physical volume "/dev/vdb1" successfully created.
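The "Can't open /dev/vdb1 exclusively. Mounted filesystem?" failure above could be caught before calling pvcreate. The following is a minimal sketch of such a pre-flight check; the function name `is_mounted` is hypothetical, and it assumes the device appears under the same name in `/proc/mounts` (true for a plain partition such as /dev/vdb1, not necessarily for device-mapper names).

```shell
#!/bin/sh
# Hypothetical helper: return 0 if the device is listed as a mount source.
# $1: device path, $2: mounts table to read (defaults to /proc/mounts).
is_mounted() {
    dev=$1
    table=${2:-/proc/mounts}
    # The first field of each line in the mounts table is the mount source.
    awk -v d="$dev" '$1 == d { found = 1 } END { exit !found }' "$table"
}

# Example with the device used in this article:
if is_mounted /dev/vdb1; then
    echo "/dev/vdb1 is still mounted; unmount it before running pvcreate"
else
    echo "/dev/vdb1 is not mounted"
fi
```

Running this right after `fuser -mv -k /u01` and `umount /u01` gives a quick confirmation that the unmount really succeeded.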
Node 2

    [root@tsdb02 bak]# vgcreate vg2020 /dev/vdb1
    Volume group "vg2020" successfully created

Node 1

    # vgdisplay
      --- Volume group ---
      VG Name               vg2020
      System ID
      Format                lvm2
      Metadata Areas        1
      Metadata Sequence No  1
      VG Access             read/write
      VG Status             resizable
      MAX LV                0
      Cur LV                0
      Open LV               0
      Max PV                0
      Cur PV                1
      Act PV                1
      VG Size               <300.00 GiB
      PE Size               4.00 MiB
      Total PE              76799
      Alloc PE / Size       0 / 0
      Free PE / Size        76799 / <300.00 GiB
      VG UUID               o3f0g5-jgMW-KhIf-v9jk-Kujo-vhY3-Hmff1Y

Node 2

    [root@tsdb02 bak]# vgdisplay
      --- Volume group ---
      VG Name               vg2020
      System ID
      Format                lvm2
      Metadata Areas        1
      Metadata Sequence No  1
      VG Access             read/write
      VG Status             resizable
      MAX LV                0
      Cur LV                0
      Open LV               0
      Max PV                0
      Cur PV                1
      Act PV                1
      VG Size               <300.00 GiB
      PE Size               4.00 MiB
      Total PE              76799
      Alloc PE / Size       0 / 0
      Free PE / Size        76799 / <300.00 GiB
      VG UUID               PU6eiu-x3tq-X3v3-IENR-M9Sn-yQ6O-cHSC7y

Node 1

    [root@tsdb01 u01]# lvcreate -L 300G -n LV20 vg2020
    Volume group "vg2020" has insufficient free space (76799 extents): 76800 required.
    [root@tsdb01 u01]# lvcreate -L 299G -n LV20 vg2020
    Logical volume "LV20" created.

Node 2

    [root@tsdb02 bak]# lvcreate -L 299G -n LV20 vg2020
    Logical volume "LV20" created.
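The failed `lvcreate -L 300G` above comes down to extent arithmetic: with a PE size of 4 MiB, 300 GiB needs 76800 extents, but the volume group reports only 76799 free. The sketch below reproduces that calculation; the function name `extents_needed` is hypothetical, and the numbers are taken from the vgdisplay output in this article.

```shell
#!/bin/sh
# Number of physical extents needed for a logical volume.
# $1: requested size in GiB, $2: PE size in MiB.
extents_needed() {
    size_gib=$1
    pe_mib=$2
    # Round up, because LVM allocates whole extents.
    echo $(( (size_gib * 1024 + pe_mib - 1) / pe_mib ))
}

free_pe=76799   # "Free PE" reported by vgdisplay in this article

for size in 300 299; do
    need=$(extents_needed "$size" 4)
    if [ "$need" -le "$free_pe" ]; then
        echo "${size}G needs $need extents: fits"
    else
        echo "${size}G needs $need extents: does not fit"
    fi
done
```

To avoid guessing a size that fits, `lvcreate -l 100%FREE -n LV20 vg2020` allocates every free extent in the volume group.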
Node 1

    [root@tsdb01 u01]# lvscan
    ACTIVE '/dev/vg2020/LV20' [299.00 GiB] inherit
    # mkfs.xfs /dev/vg2020/LV20

Node 2

    [root@tsdb02 bak]# lvscan
    ACTIVE '/dev/vg2020/LV20' [299.00 GiB] inherit
    [root@tsdb02 bak]# mkfs.xfs /dev/vg2020/LV20
    meta-data=/dev/vg2020/LV20       isize=512    agcount=4, agsize=19595264 blks
             =                       sectsz=512   attr=2, projid32bit=1
             =                       crc=1        finobt=0, sparse=0
    data     =                       bsize=4096   blocks=78381056, imaxpct=25
             =                       sunit=0      swidth=0 blks
    naming   =version 2              bsize=4096   ascii-ci=0 ftype=1
    log      =internal log           bsize=4096   blocks=38272, version=2
             =                       sectsz=512   sunit=0 blks, lazy-count=1
    realtime =none                   extsz=4096   blocks=0, rtextents=0

Node 1

    [root@tsdb01 ~]# mount /dev/vg2020/LV20 /u01

Node 2

    [root@tsdb02 bak]# df -h
    Filesystem               Size  Used Avail Use% Mounted on
    /dev/vda2                 47G   24G   24G  51% /
    devtmpfs                  32G     0   32G   0% /dev
    tmpfs                     32G     0   32G   0% /dev/shm
    tmpfs                     32G  226M   32G   1% /run
    tmpfs                     32G     0   32G   0% /sys/fs/cgroup
    /dev/vda5                 72G   28G   44G  39% /home
    /dev/vda1                950M  185M  766M  20% /boot
    tmpfs                    6.3G     0  6.3G   0% /run/user/0
    [root@tsdb02 bak]# mount /dev/vg2020/LV20 /u01
    [root@tsdb02 bak]# df -h
    Filesystem               Size  Used Avail Use% Mounted on
    /dev/vda2                 47G   24G   24G  51% /
    devtmpfs                  32G     0   32G   0% /dev
    tmpfs                     32G     0   32G   0% /dev/shm
    tmpfs                     32G  226M   32G   1% /run
    tmpfs                     32G     0   32G   0% /sys/fs/cgroup
    /dev/vda5                 72G   28G   44G  39% /home
    /dev/vda1                950M  185M  766M  20% /boot
    tmpfs                    6.3G     0  6.3G   0% /run/user/0
    /dev/mapper/vg2020-LV20  299G   33M  299G   1% /u01

Node 1

    [root@tsdb01 ~]# df -h
    Filesystem               Size  Used Avail Use% Mounted on
    /dev/vda2                 47G   40G  7.1G  85% /
    devtmpfs                  32G     0   32G   0% /dev
    tmpfs                     32G     0   32G   0% /dev/shm
    tmpfs                     32G  714M   31G   3% /run
    tmpfs                     32G     0   32G   0% /sys/fs/cgroup
    /dev/vda5                 72G   23G   50G  32% /home
    /dev/vda1                950M  185M  766M  20% /boot
    tmpfs                    6.3G     0  6.3G   0% /run/user/0
    /dev/mapper/vg2020-LV20  299G   33M  299G   1% /u01

Node 2: edit /etc/fstab

Before:

    [root@tsdb02 bak]# cat /etc/fstab
    #
    # /etc/fstab
    # Created by anaconda on Tue Oct 8 03:03:59 2019
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk'
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
    #
    UUID=9ac9aa76-6847-4e8c-81ff-53bf2c0bc367 /        xfs   defaults 0 0
    UUID=a212255f-464e-46b4-99dc-2ad8d5baffdf /boot    xfs   defaults 0 0
    UUID=f1e4ce93-4d16-464c-86de-b391167f662b /home    xfs   defaults 0 0
    UUID=dd1566ef-1ea5-4d43-a525-ce79ffaef956 swap     swap  defaults 0 0
    tmpfs                                     /dev/shm tmpfs rw,exec  0 0
    /dev/vdb1                                 /u01     xfs   defaults 0 0

After:

    [root@tsdb02 bak]# cat /etc/fstab
    #
    # /etc/fstab
    # Created by anaconda on Tue Oct 8 03:03:59 2019
    #
    # Accessible filesystems, by reference, are maintained under '/dev/disk'
    # See man pages fstab(5), findfs(8), mount(8) and/or blkid(8) for more info
    #
    UUID=9ac9aa76-6847-4e8c-81ff-53bf2c0bc367 /        xfs   defaults 0 0
    UUID=a212255f-464e-46b4-99dc-2ad8d5baffdf /boot    xfs   defaults 0 0
    UUID=f1e4ce93-4d16-464c-86de-b391167f662b /home    xfs   defaults 0 0
    UUID=dd1566ef-1ea5-4d43-a525-ce79ffaef956 swap     swap  defaults 0 0
    tmpfs                                     /dev/shm tmpfs rw,exec  0 0
    #/dev/vdb1                                /u01     xfs   defaults 0 0
    /dev/vg2020/LV20                          /u01     xfs   defaults 0 0

Before rebooting, confirm that /etc/fstab contains the new entry; a stale /dev/vdb1 entry causes errors at boot.

    # reboot

7. Extract u01.tar.gz into /u01

Node 1 (the archive stores paths under u01/, so extraction creates /u01/u01; run the mv from /u01 to move the contents up one level)

    # tar -xzvf u01.tar.gz -C /u01/
    # mv /u01/u01/* .

Node 2

    [root@tsdb02 bak]# tar -xzvf u01.tar.gz -C /u01/

8. Start the database cluster

One problem came up here: on node 2 the tar compression had been cancelled before it finished, so after the archive was extracted and restored, the lsInventory directory and the audit directory were missing.

Fix: as root, archive the lsInventory directory on node 1, send it to the corresponding path on node 2 and extract it there, then create the audit directory according to the spfile.ora file. That resolved the problem.
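The missing directories in step 8 trace back to an incomplete archive that was only noticed after the old partition had been wiped. A minimal sketch of a check that could be run before repartitioning is shown below. The function name `verify_backup` is hypothetical, and it assumes the source tree is not changing (the database is stopped) and that no file names contain newlines.

```shell
#!/bin/sh
# Hypothetical helper: succeed only if the archive can be read end to end
# and lists as many entries as the source tree contains.
# $1: path to a .tar.gz archive, $2: directory that was archived.
verify_backup() {
    archive=$1
    src=$2
    # tar -tzf exits non-zero on a truncated or corrupt gzip stream.
    listing=$(tar -tzf "$archive" 2>/dev/null) || return 1
    archived=$(printf '%s\n' "$listing" | wc -l | tr -d ' ')
    expected=$(find "$src" | wc -l | tr -d ' ')
    [ "$archived" -eq "$expected" ]
}

# Example with the paths used in this article (run before touching /dev/vdb):
# verify_backup /home/bak/u01.tar.gz /u01 && echo "backup looks complete"
```

Comparing entry counts is a coarse test; it catches a cancelled or truncated archive like the one described above, but it does not compare file contents.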

Converting a Standard Partition to LVM on CentOS 7.6

Source: 这里教程网

Date: 2026-03-03 16:12:50

作者:

编辑推荐:

下一篇:

相关推荐

-

雷神推出 MIX PRO II 迷你主机:基于 Ultra 200H,玻璃上盖 + ARGB 灯效

2 月 9 日消息,雷神 (THUNDEROBOT) 现已宣布推出基于英

-

制造商 Musnap 推出彩色墨水屏电纸书 Ocean C:支持手写笔、第三方安卓应用

2 月 10 日消息,制造商 Musnap 现已在海外推出一款 Oce

热文推荐

- Oracle 恶意攻击问题分析和解决(一)

Oracle 恶意攻击问题分析和解决(一)

26-03-03 - 记一次12c pdb打补丁失败处理过程

记一次12c pdb打补丁失败处理过程

26-03-03 - 关于Heap中的一些概念

关于Heap中的一些概念

26-03-03 - 如何提高抖音直播间人气

如何提高抖音直播间人气

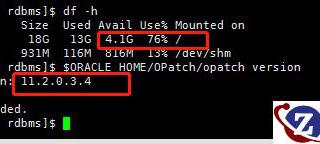

26-03-03 - oracle rac 打PSU补丁30805461两个问题(Java版本及空间不足导致失败)

- unlimited tablespace权限的授予和回收

unlimited tablespace权限的授予和回收

26-03-03 - obj$等数据字典表的统计信息采集机制一些整理

obj$等数据字典表的统计信息采集机制一些整理

26-03-03 - ORA-0155 表空间的添加、修改、删除

ORA-0155 表空间的添加、修改、删除

26-03-03 - Oracle EMCC 12c emcli命令行工具安装以及使用介绍

Oracle EMCC 12c emcli命令行工具安装以及使用介绍

26-03-03 - 三星显示 MWC 进行高尔夫推杆、篮球投篮测试,展示可折叠 OLED 耐用性