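Earlier articles covered installing and deploying OGG, so that is not repeated here. This article only describes how to configure the Extract, Pump, and Replicat processes when the Kafka cluster uses Kerberos authentication; for the other installation steps, refer to the earlier documents.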

Extract process configuration (extraction on the DG side)

table oggtest.test1;

Pump process configuration

The key difference is in the configuration of the Replicat process on the target side, shown here:

kafka_r2kafka.props configuration:

    gg.handlerlist=kafkahandler
    gg.handler.kafkahandler.type=kafka
    gg.handler.kafkahandler.KafkaProducerConfigFile= kafka_handler/kafka_producer.properties
    gg.handler.kafkahandler.topicMappingTemplate=oggtest_${tableName}
    gg.handler.kafkahandler.keyMappingTemplate=${primaryKeys}
    gg.handler.kafkahandler.format=json
    gg.handler.kafkahandler.format.includePrimaryKeys=true
    gg.handler.kafkahandler.mode=op
    gg.handler.kafkahandler.BlockingSend=false
    gg.classpath=dirprm/:/usr/local/TDH-Client/kafka/libs*:/ogg:/ogg/lib/*
    jvm.bootoptions=-Xmx512m -Xms512m -Djava.class.path=./ggjava/ggjava.jar -Dlog4j.configuration=log4j.properties -Djava.security.auth.login.config=/etc/eventstore1/conf/jaas.conf -Djava.security.krb5.conf=/etc/krb5.conf

/etc/eventstore1/conf/jaas.conf configuration:

    KafkaClient {
        com.sun.security.auth.module.Krb5LoginModule required
        useKeyTab=true
        keyTab="/etc/eventstore1/conf/kafka.keytab"
        storeKey=true
        useTicketCache=false
        principal="kafka@TDH";
    };

kafka_producer.properties configuration:

    bootstrap.servers=tdh02:9092, tdh03:9092, tdh04:9092, tdh05:9092, tdh07:9092, tdh08:9092, tdh09:9092, tdh10:9092
    acks=-1
    compression.type=lz4
    buffer.memory=134217728
    reconnect.backoff.ms=20000
    retries=10
    retry.backoff.ms=20000
    max.in.flight.requests.per.connection=1
    value.serializer=org.apache.kafka.common.serialization.ByteArraySerializer
    key.serializer=org.apache.kafka.common.serialization.ByteArraySerializer
    batch.size=524288
    linger.ms=1000
    request.timeout.ms=60000
    send.buffer.bytes=5242880
    security.protocol=SASL_PLAINTEXT
    sasl.kerberos.service.name=kafka
    sasl.mechanism=GSSAPI

The original post marks in red the entries that need particular attention in this mode. The Kerberos-specific ones are the two -Djava.security.* options in jvm.bootoptions, the jaas.conf file, and the security.protocol, sasl.kerberos.service.name, and sasl.mechanism entries in kafka_producer.properties.
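Because a missing or mistyped SASL entry in kafka_producer.properties typically only shows up once the Replicat tries to connect, a quick check of the file before starting the process can save a restart cycle. The following is a minimal sketch, not part of OGG or Kafka: the function names `parse_properties` and `missing_kerberos_settings` are hypothetical, and the expected values are simply the ones used in this article (a cluster using SASL_SSL or a different service name would need other values).

```python
# Hypothetical helper, not part of OGG or Kafka: checks that the text of a
# producer properties file carries the Kerberos-related entries from this article.

# Expected values are taken from the article's kafka_producer.properties;
# adjust them if your cluster uses a different protocol or service name.
REQUIRED = {
    "security.protocol": "SASL_PLAINTEXT",
    "sasl.mechanism": "GSSAPI",
    "sasl.kerberos.service.name": "kafka",
}


def parse_properties(text):
    """Parse simple key=value lines, skipping blank lines and # or ! comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line[0] in "#!":
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def missing_kerberos_settings(text):
    """Return a list of problems; an empty list means all entries match."""
    props = parse_properties(text)
    problems = []
    for key, expected in sorted(REQUIRED.items()):
        actual = props.get(key)
        if actual != expected:
            problems.append(f"{key}: expected {expected!r}, found {actual!r}")
    return problems
```

To use it, read the contents of kafka_producer.properties into a string and pass it to `missing_kerberos_settings`; any returned line names an entry that is absent or differs from the value shown above. The sketch only handles plain `key=value` lines, not the full Java properties syntax (line continuations, `:` separators).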

编辑推荐:

- 使用OGG将oracle增量数据实时同步至kafka(SASL/GSSAPI(Kerberos)认证)03-03

- 【MATLAB源码】5G:Low-PAPR序列仿真与交互平台03-03

- 最新!2026北京年会策划/会议策划/活动策划首选:北京飞鸟创意文化传媒03-03

- Oracle数据脱敏神器:DBMS_REDACT保护你的敏感数据03-03

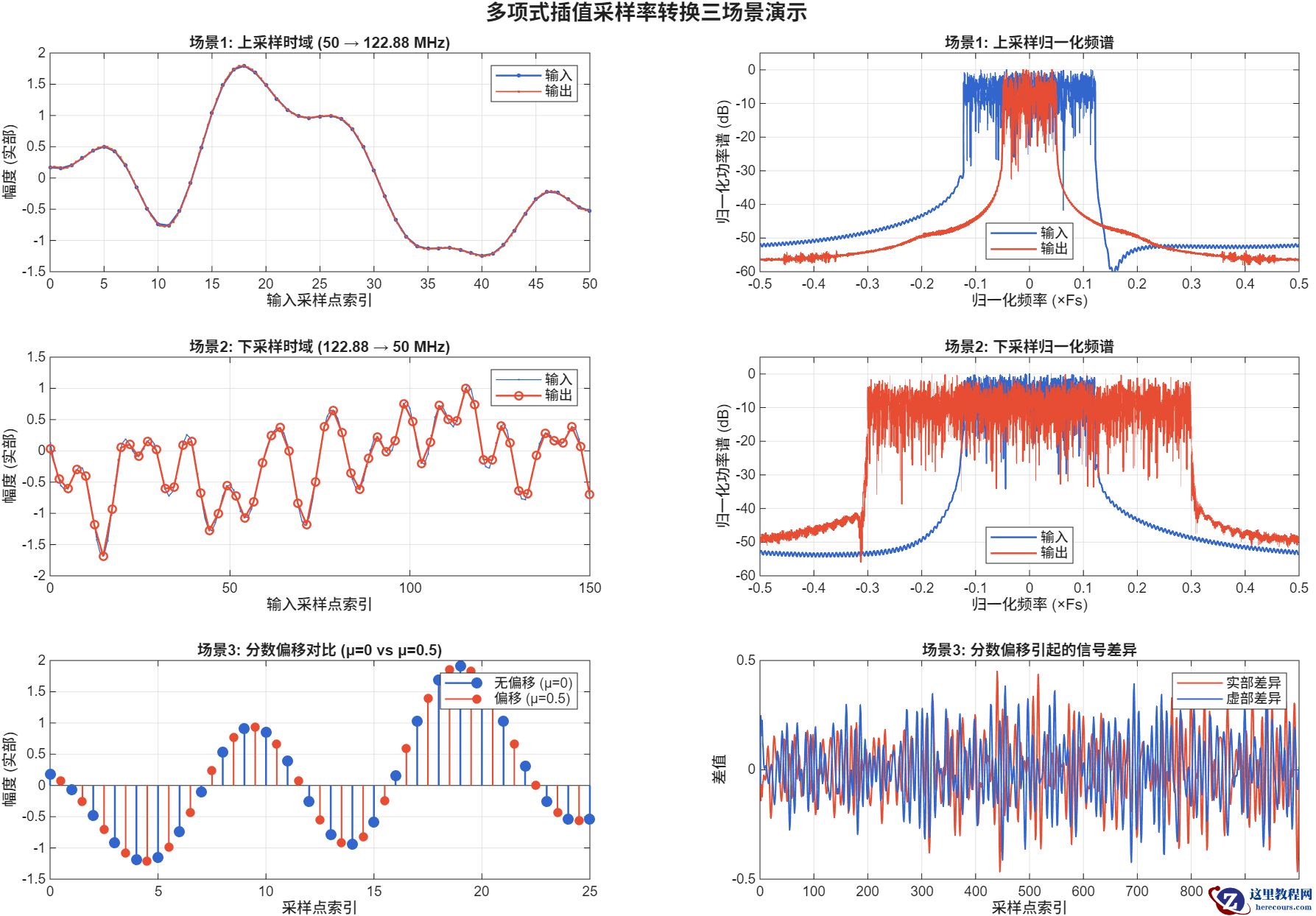

- 【MATLAB源码】5G/6G:Farrow结构采样率转换器03-03

- 成都卓勤法律咨询--家庭财务健康个性化服务定制03-03

- 定制化债务方案,郑州法枢汇法律助你告别负债焦虑03-03

- Oracle 23C实时统计:告别执行计划“盲猜”,让性能优化智能化03-03

相关推荐

-

雷神推出 MIX PRO II 迷你主机:基于 Ultra 200H,玻璃上盖 + ARGB 灯效

2 月 9 日消息,雷神 (THUNDEROBOT) 现已宣布推出基于英

-

制造商 Musnap 推出彩色墨水屏电纸书 Ocean C:支持手写笔、第三方安卓应用

2 月 10 日消息,制造商 Musnap 现已在海外推出一款 Oce

热文推荐

- 【MATLAB源码】5G:Low-PAPR序列仿真与交互平台

【MATLAB源码】5G:Low-PAPR序列仿真与交互平台

26-03-03 - 最新!2026北京年会策划/会议策划/活动策划首选:北京飞鸟创意文化传媒

最新!2026北京年会策划/会议策划/活动策划首选:北京飞鸟创意文化传媒

26-03-03 - 【MATLAB源码】5G/6G:Farrow结构采样率转换器

【MATLAB源码】5G/6G:Farrow结构采样率转换器

26-03-03 - 告别镜像拉取困境:毫秒镜像以“正规军”姿态重塑国内Docker加速生态

告别镜像拉取困境:毫秒镜像以“正规军”姿态重塑国内Docker加速生态

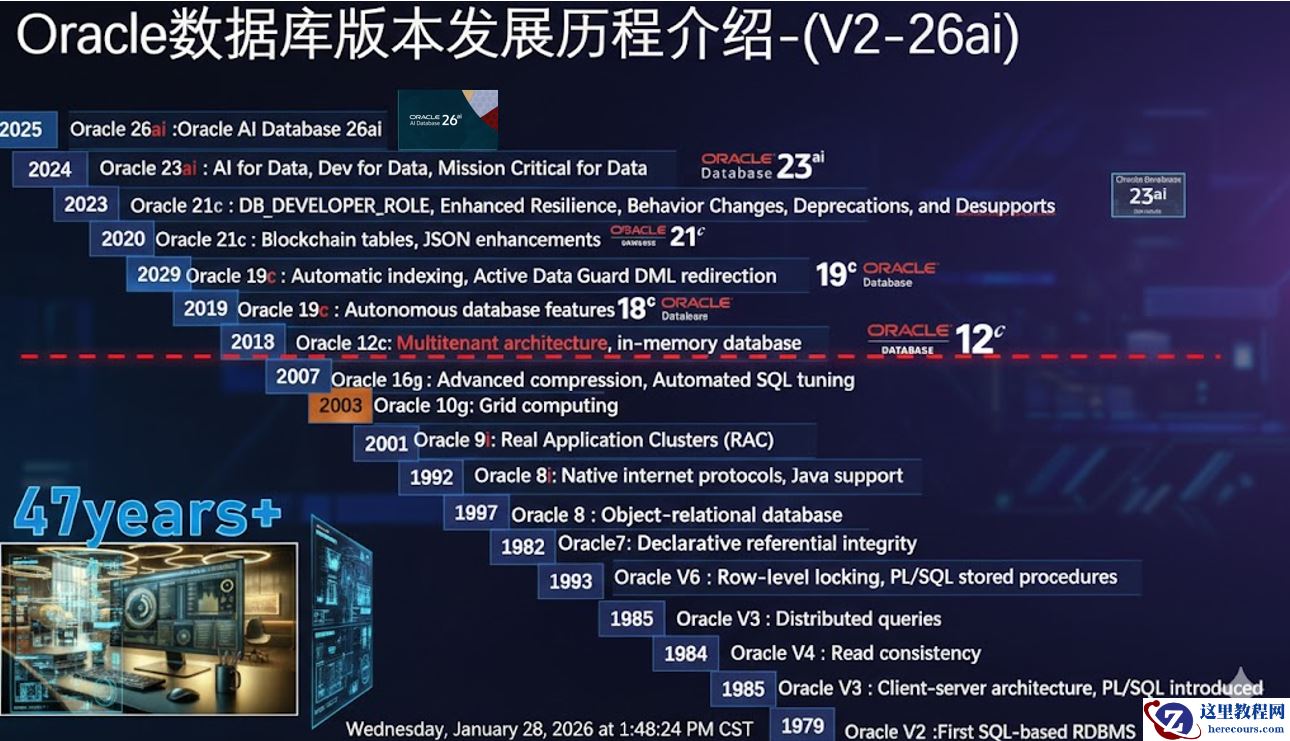

26-03-03 - 数据库管理-第404期 Oracle AI DB 23.26.1新特性一览(20260128)

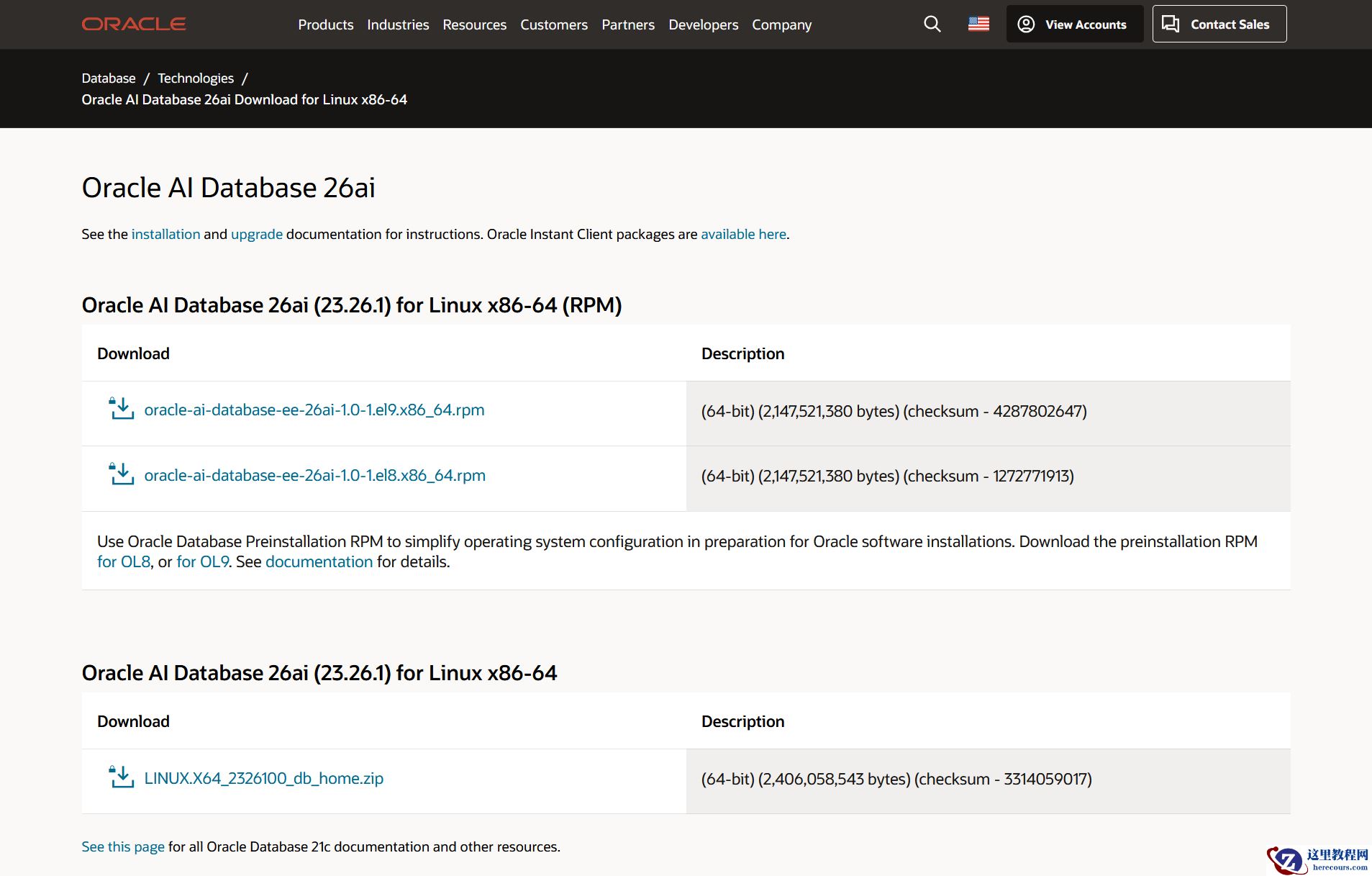

- 2026年春节前夕 Oracle AI Database 26ai linux x86-64平台本地部署版本如期正式发布

- 苦等三年!Oracle AI Database 26ai本地服务器版终于来了

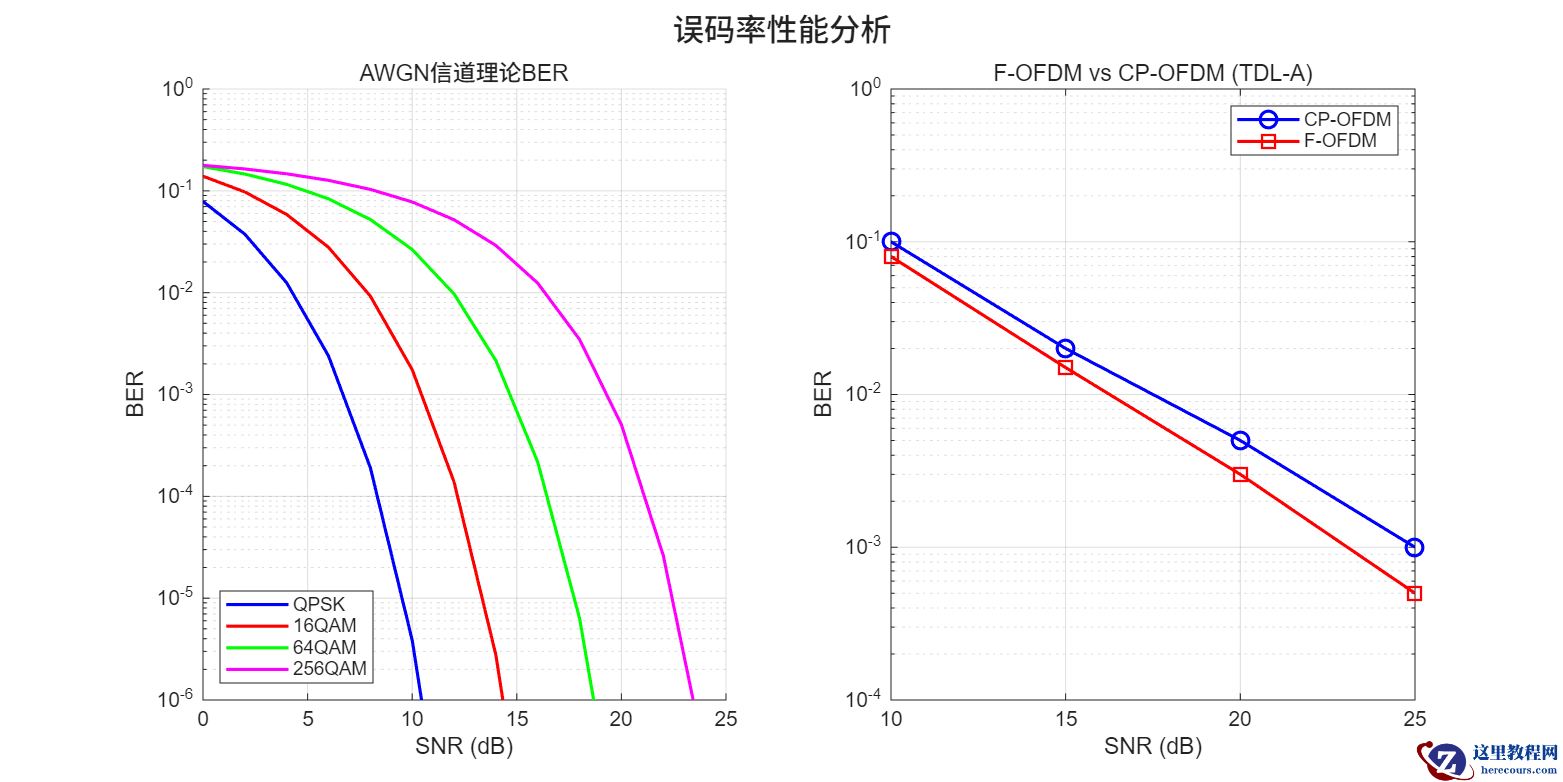

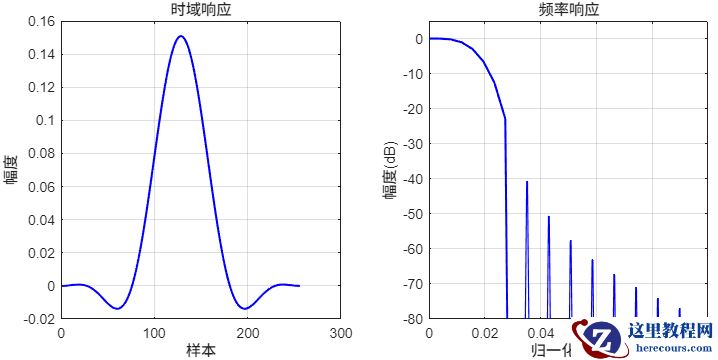

- 【MATLAB源码】F-OFDM:链路级仿真平台

【MATLAB源码】F-OFDM:链路级仿真平台

26-03-03 - 【MATLAB源码】FBMC:链路级仿真平台

【MATLAB源码】FBMC:链路级仿真平台

26-03-03 - Oracle的抗量子加密,为你的数据竖起“双重保险”

Oracle的抗量子加密,为你的数据竖起“双重保险”

26-03-03